Identifying Strategies to Mitigate Cybersickness in Virtual Reality Induced by Flying with an Interactive Travel Interface

Need for UAI–Anatomy of the Paradigm of Usable Artificial Intelligence for Domain-Specific AI Applicability

EEG Correlates of Distractions and Hesitations in Human–Robot Interaction: A LabLinking Pilot Study

The Good News, the Bad News, and the Ugly Truth: A Review on the 3D Interaction of Light Field Displays

Journal Description

Multimodal Technologies and Interaction is an international, scientific, peer-reviewed, open access journal of multimodal technologies and interaction published monthly online by MDPI.

- Open Access: free for readers, with article processing charges (APC) paid by authors or their institutions.

- High Visibility: indexed within Scopus, ESCI (Web of Science), Inspec, dblp Computer Science Bibliography, and other databases.

- Journal Rank: CiteScore - Q2 (Computer Science Applications)

- Rapid Publication: manuscripts are peer-reviewed and a first decision is provided to authors approximately 17.1 days after submission; acceptance to publication is undertaken in 4.8 days (median values for papers published in this journal in the first half of 2023).

- Recognition of Reviewers: reviewers who provide timely, thorough peer-review reports receive vouchers entitling them to a discount on the APC of their next publication in any MDPI journal, in appreciation of the work done.

Impact Factor: 2.5 (2022)

Latest Articles

Multimodal Interaction for Cobot Using MQTT

Multimodal Technol. Interact. 2023, 7(8), 78; https://doi.org/10.3390/mti7080078 - 03 Aug 2023

Abstract

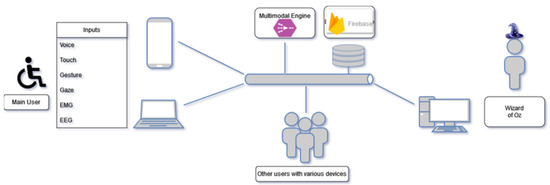

For greater efficiency, human–machine and human–robot interactions must be designed with the idea of multimodality in mind. To allow the use of several interaction modalities, such as voice, touch, and gaze tracking, on several different devices (computers, smartphones, tablets, etc.) and to integrate possible connected objects, it is necessary to have an effective and secure means of communication between the different parts of the system. This is even more important with the use of a collaborative robot (cobot) sharing the same space and very close to the human during their tasks. This study presents research work in the field of multimodal interaction for a cobot using the MQTT protocol, in virtual (Webots) and real worlds (ESP microcontrollers, Arduino, IOT2040). We show how MQTT can be used efficiently, with a common publish/subscribe mechanism for several entities of the system, in order to interact with connected objects (like LEDs and conveyor belts), robotic arms (like the Ned Niryo), or mobile robots. We compare the use of MQTT with that of the Firebase Realtime Database used in several of our previous research works. We show how a “pick–wait–choose–and place” task can be carried out jointly by a cobot and a human, and what this implies in terms of communication and ergonomic rules, via health or industrial concerns (people with disabilities, and teleoperation).

(This article belongs to the Special Issue Feature Papers in Multimodal Technologies and Interaction—Edition 2023)

Open Access Article

Enhancing Object Detection for VIPs Using YOLOv4_Resnet101 and Text-to-Speech Conversion Model

Multimodal Technol. Interact. 2023, 7(8), 77; https://doi.org/10.3390/mti7080077 - 02 Aug 2023

Abstract

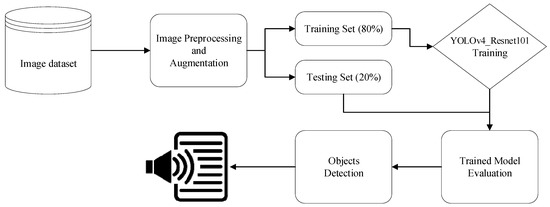

Vision impairment affects an individual’s quality of life, posing challenges for visually impaired people (VIPs) in various aspects such as object recognition and daily tasks. Previous research has focused on developing visual navigation systems to assist VIPs, but there is a need for further improvements in accuracy, speed, and inclusion of a wider range of object categories that may obstruct VIPs’ daily lives. This study presents a modified version of YOLOv4 that uses ResNet-101 as its backbone network (YOLOv4_Resnet101), trained on multiple object classes to assist VIPs in navigating their surroundings. In comparison to the Darknet backbone utilized in YOLOv4, the ResNet-101 backbone in YOLOv4_Resnet101 offers a deeper and more powerful feature extraction network. The ResNet-101’s greater capacity enables better representation of complex visual patterns, which increases the accuracy of object detection. The proposed model is validated using the Microsoft Common Objects in Context (MS COCO) dataset. Image pre-processing techniques are employed to enhance the training process, and manual annotation ensures accurate labeling of all images. The module incorporates text-to-speech conversion, providing VIPs with auditory information to assist in obstacle recognition. The model achieves an accuracy of 96.34% on the test images obtained from the dataset after 4000 iterations of training, with a loss error rate of 0.073%.

(This article belongs to the Topic Interactive Artificial Intelligence and Man-Machine Communication)

Open Access Systematic Review

How Is Privacy Behavior Formulated? A Review of Current Research and Synthesis of Information Privacy Behavioral Factors

Multimodal Technol. Interact. 2023, 7(8), 76; https://doi.org/10.3390/mti7080076 - 29 Jul 2023

Abstract

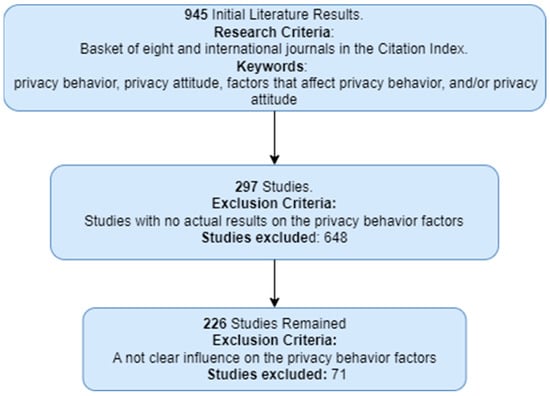

What influences Information and Communications Technology (ICT) users’ privacy behavior? Several studies have shown that users claim to care about their personal data. Despite this, they perform unsafe privacy actions, such as neglecting to configure privacy settings. In this research, we present the results of an in-depth literature review on the factors affecting privacy behavior. We seek to investigate the underlying factors that influence individuals’ privacy-conscious behavior in the digital domain, as well as effective interventions to promote such behavior. Privacy decisions regarding the disclosure of personal information may have negative consequences on individuals’ lives, such as becoming a victim of identity theft, impersonation, etc. Moreover, third parties may exploit this information for their own benefit, such as targeted advertising practices. By identifying the factors that may affect SNS users’ privacy awareness, we can assist in creating methods for effective privacy protection and/or user-centered design. Examining the results of several research studies, we found evidence that privacy behavior is affected by a variety of factors, including individual ones (e.g., demographics) and contextual ones (e.g., financial exchanges). We synthesize a framework that aggregates the scattered factors that have been found in the literature to affect privacy behavior. Our framework can be beneficial to academics and practitioners in the private and public sectors. For example, academics can utilize our findings to create specialized information privacy courses and theoretical or laboratory modules.

(This article belongs to the Special Issue Feature Papers in Multimodal Technologies and Interaction—Edition 2023)

Open Access Article

An Enhanced Diagnosis of Monkeypox Disease Using Deep Learning and a Novel Attention Model Senet on Diversified Dataset

Multimodal Technol. Interact. 2023, 7(8), 75; https://doi.org/10.3390/mti7080075 - 27 Jul 2023

Abstract

With the wide spread of Monkeypox and the increase in the weekly reported number of cases, this outbreak continues to put human beings at risk. Early detection and reporting of this disease will help in monitoring and controlling its spread and, hence, support international coordination. For this purpose, the aim of this paper is to classify three diseases, viz. Monkeypox, Chickenpox, and Measles, based on a provided image dataset using trained standalone DL models (InceptionV3, EfficientNet, VGG16) and a Squeeze and Excitation Network (SENet) attention model. The first step in implementing this approach is to search for, collect, and aggregate (if required) verified existing dataset(s). To the best of our knowledge, this is the first paper to propose the use of SENet-based attention models in the classification of Monkeypox; it also aggregates two different datasets from distinct sources in order to improve the performance parameters. The previously unexplored SENet attention architecture is incorporated into the trunk branch of InceptionV3 (SENet+InceptionV3), EfficientNet (SENet+EfficientNet), and VGG16 (SENet+VGG16), and these architectures improve the accuracy of the Monkeypox classification task significantly. Comprehensive experiments on three datasets show that the proposed work achieves considerably high results with regard to accuracy, precision, recall, and F1-score, and hence improves the overall classification performance. Thus, the proposed research work is advantageous for the enhanced diagnosis and classification of Monkeypox and can be utilized further by healthcare experts and researchers to confront its spread.

Open Access Article

The Impact of Mobile Learning on Students’ Attitudes towards Learning in an Educational Technology Course

Multimodal Technol. Interact. 2023, 7(7), 74; https://doi.org/10.3390/mti7070074 - 20 Jul 2023

Abstract

As technology has explosively and globally revolutionized the teaching and learning processes at educational institutions, enormous and innovative technological developments, along with their tools and applications, have recently entered the education system. Mobile learning (m-learning) employs wireless technologies for thinking, communicating, learning, and sharing to disseminate and exchange knowledge. Consequently, assessing the learning attitudes of students toward mobile learning is crucial, as learning attitudes impact their motivation, performance, and beliefs about mobile learning. However, mobile learning seems under-researched and may require additional efforts from researchers, especially in the context of the Middle East. Hence, this study’s contribution is enhancing our knowledge about students’ attitudes towards mobile-based learning. Therefore, the study goal was to investigate m-learning’s effect on the learning attitudes among technology education students. An explanatory sequential mixed approach was utilized to examine the attitudes of 50 students who took an educational technology class. A quasi-experiment was conducted and a phenomenological approach was adopted. Data from the experimental group and the control group were gathered. Focus group discussions with three groups and 25 semi-structured interviews were performed with students who experienced m-learning in their course. ANCOVA was conducted and revealed the impact of m-learning on the attitudes and their components. An inductive and deductive content analysis was conducted. Eleven subthemes emerged from the data under three main themes, including personalized learning, visualization of learning motivation, less learning frustration, enhancing participation, learning on familiar devices, and social interaction. The researchers recommended that higher education institutions adhere to a set of guiding principles when creating m-learning policies. Additionally, they should customize the m-learning environment with higher levels of interactivity to meet students’ needs and learning styles to improve their attitudes towards m-learning.

Open Access Review

Encoding Variables, Evaluation Criteria, and Evaluation Methods for Data Physicalisations: A Review

Multimodal Technol. Interact. 2023, 7(7), 73; https://doi.org/10.3390/mti7070073 - 18 Jul 2023

Abstract

Data physicalisations, or physical visualisations, represent data physically, using variable properties of physical media. As an emerging area, data physicalisation research needs conceptual foundations to support thinking about and designing new physical representations of data and evaluating them. Yet, it remains unclear at the moment (i) what encoding variables are at the designer’s disposal during the creation of physicalisations, (ii) what evaluation criteria could be useful, and (iii) what methods can be used to evaluate physicalisations. This article addresses these three questions through a narrative review and a systematic review. The narrative review draws on the literature from Information Visualisation, HCI and Cartography to provide a holistic view of encoding variables for data. The systematic review looks closely into the evaluation criteria and methods that can be used to evaluate data physicalisations. Both reviews offer a conceptual framework for researchers and designers interested in designing and evaluating data physicalisations. The framework can be used as a common vocabulary to describe physicalisations and to identify design opportunities. We also propose a seven-stage model for designing and evaluating physical data representations. The model can be used to guide the design of physicalisations and ideate along the stages identified. The evaluation criteria and methods extracted during the work can inform the assessment of existing and future data physicalisation artefacts.

Open Access Article

Experiencing Authenticity of the House Museums in Hybrid Environments

Multimodal Technol. Interact. 2023, 7(7), 72; https://doi.org/10.3390/mti7070072 - 18 Jul 2023

Abstract

The paper presents an existing scenario related to the advanced integration of digital technologies in the field of house museums, based on the critical literature and applied experimentation. House museums are a particular type of heritage site, in which the tension between the evocative capacity of the spaces and the requirements for preservation is highlighted. In this dimension, the use of a seamless approach amplifies the atmospheric component of the space, superimposing, through hybrid digital technologies, an interactive, context-driven layer in an open dialogue between digital and physical. The methodology moves on the one hand from the literature review, framing the macro themes of research, and on the other from the overview of case studies, selected on the basis of the experiential value of the space. The analysis of the selected cases followed three criteria: the formal dimension of the technology; the narrative plot, as storytelling of socio-cultural atmosphere or identification within the intimate story; and the involvement of visitors as individual immersion or collective rituality. The paper aims to outline a developmental panorama in which the integration of hybrid technologies points to a new seamless awareness within application scenarios as continuous and work-in-progress challenges.

(This article belongs to the Special Issue Critical Reflections on Digital Humanities and Cultural Heritage)

Open Access Article

Would You Hold My Hand? Exploring External Observers’ Perception of Artificial Hands

Multimodal Technol. Interact. 2023, 7(7), 71; https://doi.org/10.3390/mti7070071 - 17 Jul 2023

Abstract

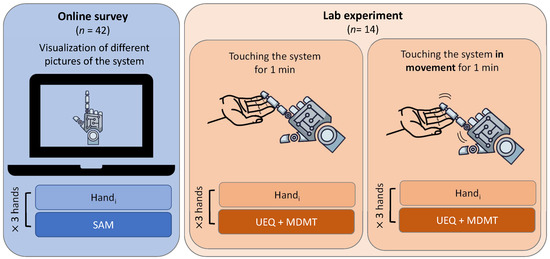

Recent technological advances have enabled the development of sophisticated prosthetic hands, which can help their users to compensate for lost motor functions. While research and development has mostly addressed the functional requirements and needs of users of these prostheses, their broader societal perception (e.g., by external observers not affected by limb loss themselves) has not yet been thoroughly explored. To fill this gap, we investigated how the physical design of artificial hands influences the perception by external observers. First, we conducted an online study (n = 42) to explore the emotional response of observers toward three different types of artificial hands. Then, we conducted a lab study (n = 14) to examine the influence of design factors and depth of interaction on perceived trust and usability. Our findings indicate that some design factors directly impact the trust individuals place in the system’s capabilities. Furthermore, engaging in deeper physical interactions leads to a more profound understanding of the underlying technology. Thus, our study shows the crucial role of the design features and interaction in shaping the emotions around, trust in, and perceived usability of artificial hands. These factors ultimately impact the overall perception of prosthetic systems and, hence, the acceptance of these technologies in society.

(This article belongs to the Special Issue Challenges in Human-Centered Robotics)

Open Access Article

Exploring the Educational Value and Impact of Vision-Impairment Simulations on Sympathy and Empathy with XREye

Multimodal Technol. Interact. 2023, 7(7), 70; https://doi.org/10.3390/mti7070070 - 06 Jul 2023

Abstract

To create a truly accessible and inclusive society, we need to take the more than 2.2 billion people with vision impairments worldwide into account when we design our cities, buildings, and everyday objects. This requires sympathy and empathy, as well as a certain level of understanding of the impact of vision impairments on perception. In this study, we explore the potential of an extended version of our vision-impairment simulation system XREye to increase sympathy and empathy and evaluate its educational value in an expert study with 56 educators and education students. We include data from a previous study in related work on sympathy and empathy as a baseline for comparison with our data. Our results show increased sympathy and empathy after experiencing XREye and positive feedback regarding its educational value. Hence, we believe that vision-impairment simulations, such as XREye, have merit for educational purposes in order to increase awareness of the challenges people with vision impairments face in their everyday lives.

(This article belongs to the Special Issue Feature Papers in Multimodal Technologies and Interaction—Edition 2023)

Open Access Article

Exploring Learning Curves in Acupuncture Education Using Vision-Based Needle Tracking

Multimodal Technol. Interact. 2023, 7(7), 69; https://doi.org/10.3390/mti7070069 - 06 Jul 2023

Abstract

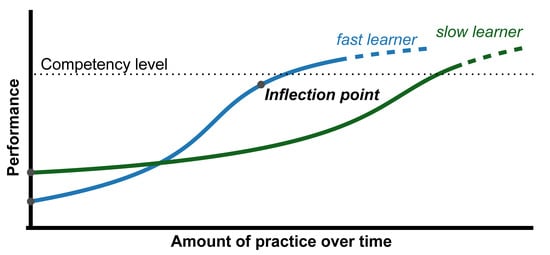

Measuring learning curves allows for the inspection of the rate of learning and competency threshold for each individual, training lesson, or training method. In this work, we investigated learning curves in acupuncture needle manipulation training with continuous performance measurement using a vision-based needle training system. We tracked the needle insertion depth of 10 students to investigate their learning curves. The results show that the group-level learning curve was fitted with the Thurstone curve, indicating that students were able to improve their needle insertion skills after repeated practice. Additionally, the analysis of individual learning curves revealed valuable insights into the learning experiences of each participant, highlighting the importance of considering individual differences in learning styles and abilities when designing training programs.

(This article belongs to the Special Issue Feature Papers in Multimodal Technologies and Interaction—Edition 2023)

Open Access Article

Using Open-Source Automatic Speech Recognition Tools for the Annotation of Dutch Infant-Directed Speech

Multimodal Technol. Interact. 2023, 7(7), 68; https://doi.org/10.3390/mti7070068 - 03 Jul 2023

Abstract

There is a large interest in the annotation of speech addressed to infants. Infant-directed speech (IDS) has acoustic properties that might pose a challenge to automatic speech recognition (ASR) tools developed for adult-directed speech (ADS). While ASR tools could potentially speed up the annotation process, their effectiveness on this speech register is currently unknown. In this study, we assessed to what extent open-source ASR tools can successfully transcribe IDS. We used speech data from 21 Dutch mothers reading picture books containing target words to their 18- and 24-month-old children (IDS) and the experimenter (ADS). In Experiment 1, we examined how the ASR tool Kaldi-NL performs at annotating target words in IDS vs. ADS. We found that Kaldi-NL only found 55.8% of target words in IDS, while it annotated 66.8% correctly in ADS. In Experiment 2, we aimed to assess the difficulties in annotating IDS more broadly by transcribing all IDS utterances manually and comparing the word error rates (WERs) of two different ASR systems: Kaldi-NL and WhisperX. We found that WhisperX performs significantly better than Kaldi-NL. While there is much room for improvement, the results show that automatic transcriptions provide a promising starting point for researchers who have to transcribe a large amount of speech directed at infants.
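
The word error rate (WER) compared in Experiment 2 is commonly defined as the word-level edit distance (substitutions, deletions, and insertions) between an automatic transcript and a manual reference, divided by the number of reference words. The Python sketch below illustrates that standard definition only; it is not the authors' evaluation pipeline, and the function name `word_error_rate` and the example sentences are hypothetical.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Return WER = word-level edit distance / number of reference words.

    Hypothetical helper for illustration; tokenisation is plain whitespace
    splitting, with no case or punctuation normalisation.
    """
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # prev[j] holds the edit distance between the reference words seen so far
    # (excluding the current one) and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = prev[j - 1] + (0 if ref_word == hyp_word else 1)
            deletion = prev[j] + 1      # reference word missing from hypothesis
            insertion = curr[j - 1] + 1  # extra word in hypothesis
            curr[j] = min(substitution, deletion, insertion)
        prev = curr
    return prev[-1] / len(ref)
```

For instance, `word_error_rate("de kat zit op de mat", "de kat zit op mat")` counts one deleted word out of six reference words, giving a WER of 1/6.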

(This article belongs to the Special Issue Child–Computer Interaction and Multimodal Child Behavior Analysis)

Open Access Article

Federated Learning for Clinical Event Classification Using Vital Signs Data

Multimodal Technol. Interact. 2023, 7(7), 67; https://doi.org/10.3390/mti7070067 - 29 Jun 2023

Abstract

Accurate and timely diagnosis is a pillar of effective healthcare. However, the challenge lies in gathering extensive training data while maintaining patient privacy. This study introduces a novel approach using federated learning (FL) and a cross-device multimodal model for clinical event classification based on vital signs data. Our architecture employs FL to train several machine learning models including random forest, AdaBoost, and SGD ensemble models on vital signs data. The data were sourced from a diverse clientele at a Boston hospital (MIMIC-IV dataset). The FL structure trains directly on each client’s device, ensuring no transfer of sensitive data and preserving patient privacy. The study demonstrates that FL offers a powerful tool for privacy-preserving clinical event classification, with our approach achieving an impressive accuracy of 98.9%. These findings highlight the significant potential of FL and cross-device ensemble technology in healthcare applications, especially in the context of handling large volumes of sensitive patient data.

Open Access Review

A Dynamic Interactive Approach to Music Listening: The Role of Entrainment, Attunement and Resonance

Multimodal Technol. Interact. 2023, 7(7), 66; https://doi.org/10.3390/mti7070066 - 28 Jun 2023

Abstract

This paper takes a dynamic interactive stance to music listening. It revolves around the focal concept of entrainment as an operational tool for the description of fine-grained dynamics between the music as an entraining stimulus and the listener as an entrained subject. Listeners, in this view, can be “entrained” by the sounds at several levels of processing, dependent on the degree of attunement and alignment of their attention. The concept of entrainment, however, is somewhat ill-defined, with distinct conceptual labels, such as external vs. mutual, symmetrical vs. asymmetrical, metrical vs. non-metrical, within-persons and between-person, and physical vs. cognitive entrainment. The boundaries between entrainment, resonance, and synchronization are also not always very clear. There is, as such, a need for a broadened approach to entrainment, taking as a starting point the concept of oscillators that interact with each other in a continuous and ongoing way, and relying on the theoretical framework of interaction dynamics and the concept of adaptation. Entrainment, in this broadened view, is seen as an adaptive process that accommodates to the music under the influence of both the attentional direction of the listener and the configurations of the sounding stimuli.

Open Access Article

Towards Universal Industrial Augmented Reality: Implementing a Modular IAR System to Support Assembly Processes

Multimodal Technol. Interact. 2023, 7(7), 65; https://doi.org/10.3390/mti7070065 - 27 Jun 2023

Abstract

While Industrial Augmented Reality (IAR) has many applications across the whole product lifecycle, most IAR applications today are custom-built for specific use-cases in practice. This contribution builds upon a scoping literature review of IAR data representations to present a modern, modular IAR architecture. The individual modules of the presented approach are either responsible for user interface and user interaction or for data processing. They are use-case neutral and independent of each other, while communicating through a strictly separated application layer. To demonstrate the architecture, this contribution presents an assembly process that is supported once with a pick-to-light system and once using in situ projections. Both are implemented on top of the novel architecture, allowing most of the work on the individual models to be reused. This IAR architecture, based on clearly separated modules with defined interfaces, particularly allows small companies with limited personnel resources to adapt IAR for their specific use-cases more easily than developing single-use applications from scratch.

Open Access Review

From Earlier Exploration to Advanced Applications: Bibliometric and Systematic Review of Augmented Reality in the Tourism Industry (2002–2022)

Multimodal Technol. Interact. 2023, 7(7), 64; https://doi.org/10.3390/mti7070064 - 26 Jun 2023

Abstract

Augmented reality has emerged as a transformative technology, with the potential to revolutionize the tourism industry. Nonetheless, there is a scarcity of studies tracing the progression of AR and its application in tourism, from early exploration to recent advancements. This study aims to provide a comprehensive overview of the evolution, contexts, and design elements of AR in tourism over the period 2002–2022, offering insights for further progress in this domain. Employing a dual-method approach, a bibliometric analysis was conducted on 861 articles collected from the Scopus and Web of Science databases, to investigate the evolution of AR research over time and across countries, and to identify the main contexts of the utilization of AR in tourism. In the second part of our study, a systematic content analysis was conducted, focusing on a subset of 57 selected studies that specifically employed AR systems in various tourism situations. Through this analysis, the most commonly utilized AR design components, such as tracking systems, AR devices, tourism settings, and virtual content were summarized. Furthermore, we explored how these components were integrated to enhance the overall tourism experience. The findings reveal a growing trend in research production, led by Europe and Asia. Key contexts of AR applications in tourism encompass cultural heritage, mobile AR, and smart tourism, with emerging topics such as artificial intelligence (AI), big data, and COVID-19. Frequently used AR design components comprise mobile devices, marker-less tracking systems, outdoor environments, and visual overlays. Future research could involve optimizing AR experiences for users with disabilities, supporting multicultural experiences, integrating AI with big data, fostering sustainability, and remote virtual tourism. This study contributes to the ongoing discourse on the role of AR in shaping the future of tourism in the post-COVID-19 era, by providing valuable insights for researchers, practitioners, and policymakers in the tourism industry.

Open Access Article

Mid-Air Gestural Interaction with a Large Fogscreen

Multimodal Technol. Interact. 2023, 7(7), 63; https://doi.org/10.3390/mti7070063 - 24 Jun 2023

Abstract

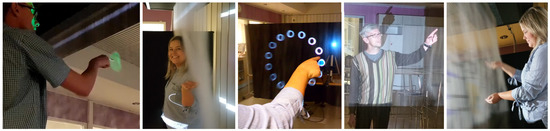

Projected walk-through fogscreens have been created, but there is little research on the evaluation of the interaction performance with fogscreens. The present study investigated mid-air hand gestures for interaction with a large fogscreen. Participants (N = 20) selected objects from a fogscreen

[...] Read more.

Projected walk-through fogscreens have been created, but there is little research on the evaluation of the interaction performance with fogscreens. The present study investigated mid-air hand gestures for interaction with a large fogscreen. Participants (N = 20) selected objects from a fogscreen using tapping and dwell-based gestural techniques, with and without vibrotactile/haptic feedback. In terms of Fitts’ law, the throughput was about 1.4 bps to 2.6 bps, suggesting that gestural interaction with a large fogscreen is a suitable and effective input method. Our results also suggest that tapping without haptic feedback has good performance and potential for interaction with a fogscreen, and that tactile feedback is not necessary for effective mid-air interaction. These findings have implications for the design of gestural interfaces suitable for interaction with fogscreens.

(This article belongs to the Special Issue Feature Papers in Multimodal Technologies and Interaction—Edition 2023)

Open Access Article

Are Drivers Allowed to Sleep? Sleep Inertia Effects Drivers’ Performance after Different Sleep Durations in Automated Driving

Multimodal Technol. Interact. 2023, 7(6), 62; https://doi.org/10.3390/mti7060062 - 16 Jun 2023

Abstract

Higher levels of automated driving may offer the possibility to sleep in the driver’s seat in the car, and it is foreseeable that drivers will voluntarily or involuntarily fall asleep when they do not need to drive. Post-sleep performance impairments due to sleep inertia, a brief period of impaired cognitive performance after waking up, are a potential safety issue when drivers need to take over and drive manually. The present study assessed whether sleep inertia has an effect on driving and cognitive performance after different sleep durations. A driving simulator study with n = 13 participants was conducted. Driving and cognitive performance were analyzed after waking up from a 10–20 min sleep, a 30–60 min sleep, and after resting without sleep. The study’s results indicate that a short sleep duration does not reliably prevent sleep inertia. After the 10–20 min sleep, cognitive performance upon waking up was decreased, but the sleep inertia impairment faded within 15 min. Although the driving parameters showed no significant difference between the conditions, participants subjectively felt more tired after both sleep durations compared to resting. The small sample size of 13 participants, tested in a within-subjects design, may have prevented medium and small effects from becoming significant. In our study, take-over was offered without time pressure, and take-over times ranged from 3.15 min to 4.09 min after the alarm bell, with a mean value of 3.56 min in both sleeping conditions. The results suggest that daytime naps without previous sleep deprivation result in mild and short-term impairments. Further research is recommended to understand the severity of impairments caused by different intensities of sleep inertia.

(This article belongs to the Special Issue Cooperative Intelligence in Automated Driving- 2nd Edition)

Open Access Article

Social Cohesion in Interactive Digital Heritage Experiences

Multimodal Technol. Interact. 2023, 7(6), 61; https://doi.org/10.3390/mti7060061 - 15 Jun 2023

Abstract

►▼

Show Figures

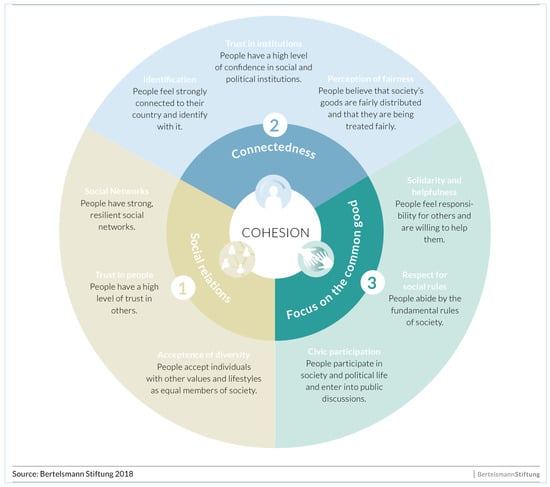

Presently, social cohesion (SC) is a priority at different levels. Cultural heritage is an ideal context to promote SC through interactive digital technologies designed to engage groups of visitors. The purpose of the present study is to identify how to design digital heritage

[...] Read more.

Presently, social cohesion (SC) is a priority at different levels. Cultural heritage is an ideal context to promote SC through interactive digital technologies designed to engage groups of visitors. The purpose of the present study is to identify how to design digital heritage applications for SC and how to measure it. The results are based on the design of a cultural probe kit used to identify the design elements on top of which a collaborative and hybrid prototype, the Brancacci POV, was developed. Here, we analysed the results of this prototype, which included 107 visitors with respective groups of 5 participants and guided by an expert. From this analysis, the possibility of strengthening SC when collaborative tasks are included emerged. Additionally, it appeared to be possible to shorten the distance between citizens and cultural institutions if “mediated dialogue” approaches were adopted and if focus, motivation, trust and “in-group” perception of inclusion emerge when digital heritage experiences were set in intimate and quiet environments.

Open Access Article

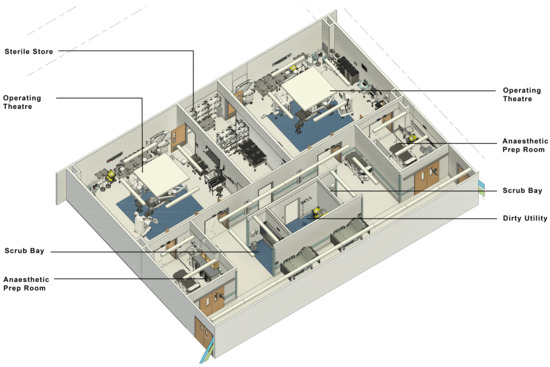

On the Effectiveness of Using Virtual Reality to View BIM Metadata in Architectural Design Reviews for Healthcare

Multimodal Technol. Interact. 2023, 7(6), 60; https://doi.org/10.3390/mti7060060 - 07 Jun 2023

Abstract

This article reports on a study that assessed whether Virtual Reality (VR) can be used to display Building Information Modelling (BIM) metadata alongside spatial data in a virtual environment, and by doing so determine if it increases the effectiveness of the design review by improving participants’ understanding of the design. Previous research has illustrated the potential for VR to enhance design reviews, especially the ability to convey spatial information, but there has been limited research into how VR can convey additional BIM metadata. A user study with 17 healthcare professionals assessed participants’ performances and preferences for completing design reviews in VR or using a traditional design review system of PDF drawings and a 3D model. The VR condition had a higher task completion rate, a higher SUS score and generally faster completion times. VR increases the effectiveness of a design review conducted by healthcare professionals.

(This article belongs to the Special Issue Multimodal User Interfaces and Experiences: Challenges, Applications, and Perspectives)

Open Access Article

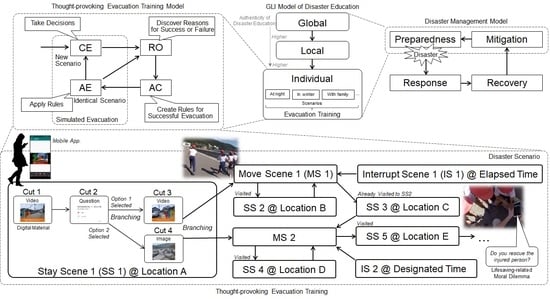

Location-Based Game for Thought-Provoking Evacuation Training

Multimodal Technol. Interact. 2023, 7(6), 59; https://doi.org/10.3390/mti7060059 - 07 Jun 2023

Abstract

Participation in evacuation training can aid survival in the event of an unpredictable disaster, such as an earthquake. However, conventional evacuation training is not well designed for provoking critical thinking in participants regarding the processes involved in a successful evacuation. To realize thought-provoking evacuation training, we developed a location-based game that presents digital materials that express disaster situations corresponding to locations or times preset in a scenario and providing scenario-based multi-ending as the game element. The developed game motivates participants to take decisions by providing high situational and audiovisual realism. In addition, the game encourages the participants to think objectively about the evacuation process by working together with a reflection-support system. We practiced thought-provoking evacuation training with fifth-grade students, focusing on tsunami evacuation and lifesaving-related moral dilemmas. In this practice, we observed that the participants took decisions as if they were dealing with actual disaster situations and objectively thought about the evacuation process by reflecting on their decisions. Meanwhile, we found that lifesaving-related moral dilemmas are difficult to address in evacuation training.

(This article belongs to the Special Issue Feature Papers in Multimodal Technologies and Interaction—Edition 2023)

News

31 July 2023

MDPI’s 2022 Best PhD Thesis Awards in Computer Science and Mathematics—Winners Announced

31 July 2023

MDPI’s 2022 Young Investigator Awards in Computer Science and Mathematics—Winners Announced

Topics

Topic in

AI, Algorithms, Information, MTI, Sensors

Lightweight Deep Neural Networks for Video Analytics

Topic Editors: Amin Ullah, Tanveer Hussain, Mohammad Farhad Bulbul

Deadline: 31 December 2023

Topic in

Entropy, Future Internet, Algorithms, Computation, MAKE, MTI

Interactive Artificial Intelligence and Man-Machine Communication

Topic Editors: Christos Troussas, Cleo Sgouropoulou, Akrivi Krouska, Ioannis Voyiatzis, Athanasios Voulodimos

Deadline: 20 February 2024

Topic in

Informatics, Information, Mathematics, MTI, Symmetry

Youth Engagement in Social Media in the Post COVID-19 Era

Topic Editors: Naseer Abbas Khan, Shahid Kalim Khan, Abdul Qayyum

Deadline: 30 September 2024

Special Issues

Special Issue in

MTI

3D User Interfaces and Virtual Reality

Guest Editors: Arun K. Kulshreshth, Kevin Pfeil

Deadline: 30 September 2023

Special Issue in

MTI

Designing EdTech and Virtual Learning Environments

Guest Editors: Stephan Schlögl, Deepak Khazanchi, Peter Mirski, Teresa Spieß, Reinhard Bernsteiner, Christian Ploder, Pascal Schöttle, Matthias Janetschek

Deadline: 15 October 2023

Special Issue in

MTI

Cooperative Intelligence in Automated Driving- 2nd Edition

Guest Editors: Andreas Riener, Myounghoon Jeon (Philart), Ronald Schroeter

Deadline: 20 December 2023

Special Issue in

MTI

Multimodal User Interfaces and Experiences: Challenges, Applications, and Perspectives

Guest Editors: Wei Liu, Jan Auernhammer, Takumi Ohashi, Di Zhu, Kuo-Hsiang Chen

Deadline: 31 December 2023