Resampling Imbalanced Network Intrusion Datasets to Identify Rare Attacks

A Multiverse Graph to Help Scientific Reasoning from Web Usage: Interpretable Patterns of Assessor Shifts in GRAPHYP

From NFT 1.0 to NFT 2.0: A Review of the Evolution of Non-Fungible Tokens

Performance Evaluation of a Lane Correction Module Stress Test: A Field Test of Tesla Model 3

A New AI-Based Semantic Cyber Intelligence Agent

Journal Description

Future Internet is a scholarly, peer-reviewed, open access journal on Internet technologies and the information society, published monthly online by MDPI.

- Open Access: free for readers, with article processing charges (APC) paid by authors or their institutions.

- High Visibility: indexed within Scopus, ESCI (Web of Science), Ei Compendex, dblp, Inspec, and other databases.

- Journal Rank: CiteScore - Q1 (Computer Networks and Communications)

- Rapid Publication: manuscripts are peer-reviewed and a first decision is provided to authors approximately 13.6 days after submission; acceptance to publication is undertaken in 2.7 days (median values for papers published in this journal in the first half of 2023).

- Recognition of Reviewers: reviewers who provide timely, thorough peer-review reports receive vouchers entitling them to a discount on the APC of their next publication in any MDPI journal, in appreciation of the work done.

Impact Factor: 3.4 (2022); 5-Year Impact Factor: 3.4 (2022)

Latest Articles

Efficient Integration of Heterogeneous Mobility-Pollution Big Data for Joint Analytics at Scale with QoS Guarantees

Future Internet 2023, 15(8), 263; https://doi.org/10.3390/fi15080263 - 07 Aug 2023

Abstract

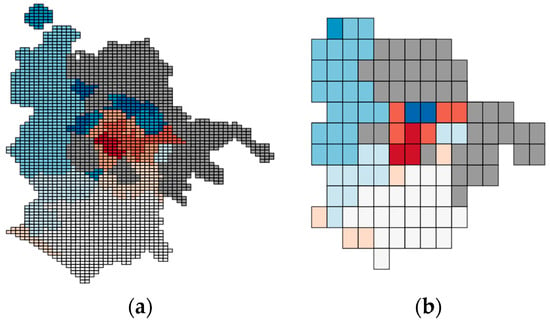

Numerous real-life smart city application scenarios require joint analytics on unified views of georeferenced mobility data with environment contextual data including pollution and meteorological data. particularly, future urban planning requires restricting vehicle access to specific areas of a city to reduce the adverse

[...] Read more.

Numerous real-life smart city application scenarios require joint analytics on unified views of georeferenced mobility data with environmental contextual data, including pollution and meteorological data. In particular, future urban planning requires restricting vehicle access to specific areas of a city to reduce the adverse effect of engine combustion emissions on the health of residents and cyclists. Current big spatial data management systems do not come with out-of-the-box support for such scenarios. To close this gap, in this paper, we show the design and prototyping of a novel system, which we term EMDI, for the enrichment of human and vehicle mobility data with pollution information, thus enabling integrated analytics on a unified view. Our system supports a variety of queries including single geo-statistics, such as ‘mean’, and Top-N queries, in addition to geo-visualization on the combined view. We have tested our system with real big georeferenced mobility and environmental data coming from the city of Bologna in Italy. Our testing results show that our system can be efficiently utilized for advanced combined pollution-mobility analytics at scale with QoS guarantees. Specifically, a reduction in latency of roughly 65%, on average, is obtained by using EMDI as opposed to the plain baseline. We also obtain statistically significant accuracy results for Top-N queries, ranging roughly from 0.84 to 1 for both Spearman and Pearson correlation coefficients depending on the geo-encoding configurations, in addition to significant single geo-statistics accuracy values, expressed using Mean Absolute Percentage Error, in the range from 0.00392 to 0.000195.

(This article belongs to the Special Issue State-of-the-Art Future Internet Technology in Italy 2022–2023)

Open Access Article

Applying Detection Leakage on Hybrid Cryptography to Secure Transaction Information in E-Commerce Apps

Future Internet 2023, 15(8), 262; https://doi.org/10.3390/fi15080262 - 01 Aug 2023

Abstract

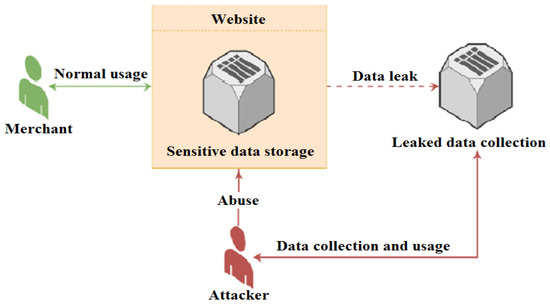

Technology advancements have driven a boost in electronic commerce use in the present day due to an increase in demand processes, regardless of whether goods, products, services, or payments are being bought or sold. Various goods are purchased and sold online by merchants

[...] Read more.

Technology advancements have driven a boost in electronic commerce use in the present day due to an increase in demand processes, regardless of whether goods, products, services, or payments are being bought or sold. Various goods are purchased and sold online by merchants [...]

(This article belongs to the Special Issue Information and Future Internet Security, Trust and Privacy II)

Open Access Article

Towards Efficient Resource Allocation for Federated Learning in Virtualized Managed Environments

Future Internet 2023, 15(8), 261; https://doi.org/10.3390/fi15080261 - 31 Jul 2023

Abstract

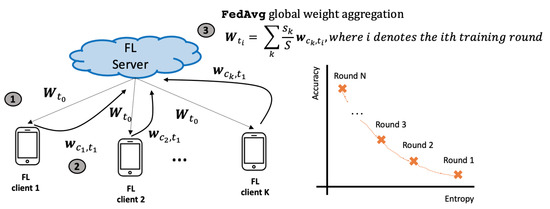

Federated learning (FL) is a transformative approach to Machine Learning that enables the training of a shared model without transferring private data to a central location. This decentralized training paradigm has found particular applicability in edge computing, where IoT devices and edge nodes

[...] Read more.

Federated learning (FL) is a transformative approach to Machine Learning that enables the training of a shared model without transferring private data to a central location. This decentralized training paradigm has found particular applicability in edge computing, where IoT devices and edge nodes often possess limited computational power, network bandwidth, and energy resources. While various techniques have been developed to optimize the FL training process, an important question remains unanswered: how should resources be allocated in the training workflow? To address this question, it is crucial to understand the nature of these resources. In physical environments, the allocation is typically performed at the node level, with the entire node dedicated to executing a single workload. In contrast, virtualized environments allow for the dynamic partitioning of a node into containerized units that can adapt to changing workloads. Consequently, the new question that arises is: how can a physical node be partitioned into virtual resources to maximize the efficiency of the FL process? To answer this, we investigate various resource allocation methods that consider factors such as computational and network capabilities, the complexity of datasets, as well as the specific characteristics of the FL workflow and ML backend. We explore two scenarios: (i) running FL over a finite number of testbed nodes and (ii) hosting multiple parallel FL workflows on the same set of testbed nodes. Our findings reveal that the default configurations of state-of-the-art cloud orchestrators are sub-optimal when orchestrating FL workflows. Additionally, we demonstrate that different libraries and ML models exhibit diverse computational footprints. Building upon these insights, we discuss methods to mitigate computational interferences and enhance the overall performance of the FL pipeline execution.

(This article belongs to the Special Issue Edge-Cloud Computing and Federated-Split Learning in the Internet of Things)

Open Access Review

The Power of Generative AI: A Review of Requirements, Models, Input–Output Formats, Evaluation Metrics, and Challenges

Future Internet 2023, 15(8), 260; https://doi.org/10.3390/fi15080260 - 31 Jul 2023

Abstract

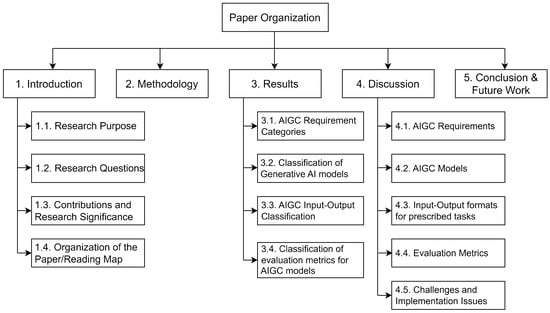

Generative artificial intelligence (AI) has emerged as a powerful technology with numerous applications in various domains. There is a need to identify the requirements and evaluation metrics for generative AI models designed for specific tasks. The purpose of the research aims to investigate

[...] Read more.

Generative artificial intelligence (AI) has emerged as a powerful technology with numerous applications in various domains. There is a need to identify the requirements and evaluation metrics for generative AI models designed for specific tasks. This research investigates the fundamental aspects of generative AI systems, including their requirements, models, input–output formats, and evaluation metrics. The study addresses key research questions and presents comprehensive insights to guide researchers, developers, and practitioners in the field. Firstly, the requirements necessary for implementing generative AI systems are examined and grouped into three categories: hardware, software, and user experience. Furthermore, the study explores the different types of generative AI models described in the literature by presenting a taxonomy based on architectural characteristics, such as variational autoencoders (VAEs), generative adversarial networks (GANs), diffusion models, transformers, language models, normalizing flow models, and hybrid models. A comprehensive classification of input and output formats used in generative AI systems is also provided. Moreover, the research proposes a classification system based on output types and discusses commonly used evaluation metrics in generative AI. The findings contribute to advancements in the field, enabling researchers, developers, and practitioners to effectively implement and evaluate generative AI models for various applications. The significance of the research lies in understanding that generative AI system requirements are crucial for effective planning, design, and optimal performance. A taxonomy of models aids in selecting suitable options and driving advancements. Classifying input–output formats enables leveraging diverse formats for customized systems, while evaluation metrics establish standardized methods to assess model quality and performance.

(This article belongs to the Special Issue State-of-the-Art Future Internet Technology in USA 2022–2023)

Open Access Article

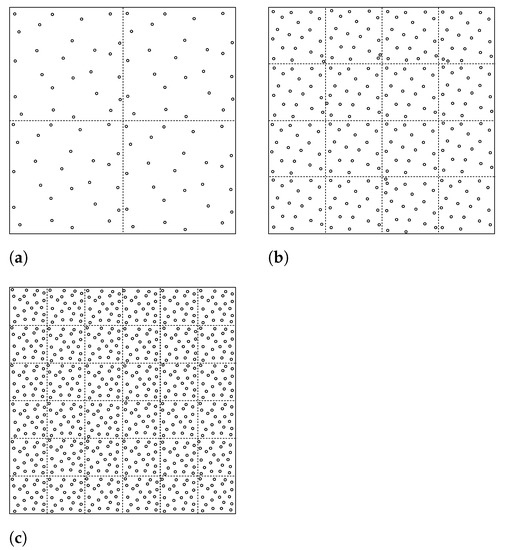

Virtual Grid-Based Routing for Query-Driven Wireless Sensor Networks

Future Internet 2023, 15(8), 259; https://doi.org/10.3390/fi15080259 - 30 Jul 2023

Abstract

In the context of query-driven wireless sensor networks (WSNs), a unique scenario arises where sensor nodes are solicited by a base station, also known as a sink, based on specific areas of interest (AoIs). Upon receiving a query, designated sensor nodes are tasked with transmitting their data to the sink. However, the routing of these queries from the sink to the sensor nodes becomes intricate when the sink is mobile. The sink’s movement after issuing a query can potentially disrupt the performance of data delivery. To address these challenges, we have proposed an innovative approach called Query-driven Virtual Grid-based Routing Protocol (VGRQ), aiming to enhance energy efficiency and reduce data delivery delays. In VGRQ, we construct a grid consisting of square-shaped virtual cells, with the number of cells matching the count of sensor nodes. Each cell designates a specific node as the cell header (CH), and these CHs establish connections with each other to form a chain-like structure. This chain serves two primary purposes: sharing the mobile sink’s location information and facilitating the transmission of queries to the AoI as well as data to the sink. By employing the VGRQ approach, we seek to optimize the performance of query-driven WSNs. It enhances energy utilization and reduces data delivery delays. Additionally, VGRQ results in ≈10% and ≈27% improvement in energy consumption when compared with QRRP and QDVGDD, respectively.

(This article belongs to the Special Issue Applications of Wireless Sensor Networks and Internet of Things)

Open Access Article

An Optimal Authentication Scheme through Dual Signature for the Internet of Medical Things

Future Internet 2023, 15(8), 258; https://doi.org/10.3390/fi15080258 - 30 Jul 2023

Abstract

The Internet of Medical Things (IoMT) overcomes the flaws in the traditional healthcare system by enabling remote administration, more effective use of resources, and the mobility of medical devices to fulfil the patient’s needs. The IoMT makes it simple to review the patient’s cloud-based medical history in addition to allowing the doctor to keep a close eye on the patient’s condition. However, any communication must be secure and dependable due to the private nature of patient medical records. In this paper, we propose an authentication method for the IoMT based on hyperelliptic curves and featuring dual signatures. The decreased key size of hyperelliptic curves makes the proposed scheme efficient. Furthermore, security validation analysis is performed with the help of the formal verification tool Scyther, which shows that the proposed scheme is secure against several types of attacks. A comparison of the proposed scheme’s computational and communication costs with those of existing schemes reveals its efficiency.

(This article belongs to the Special Issue QoS in Wireless Sensor Network for IoT Applications)

Open Access Article

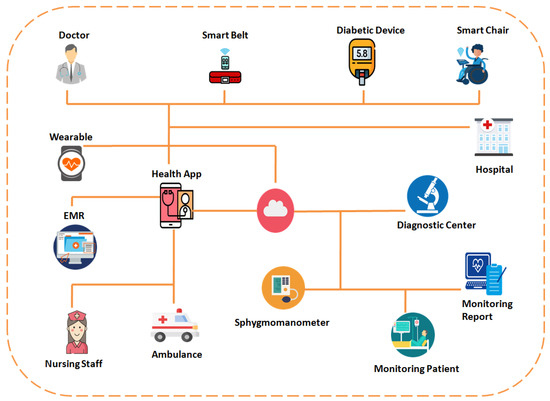

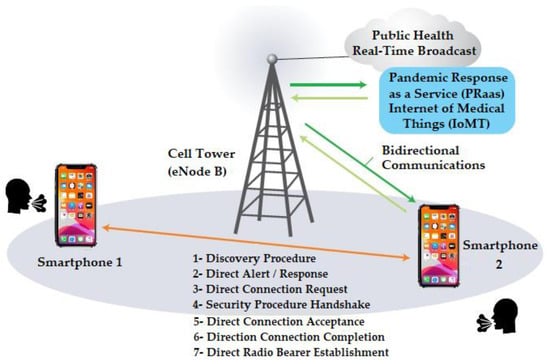

The mPOC Framework: An Autonomous Outbreak Prediction and Monitoring Platform Based on Wearable IoMT Approach

Future Internet 2023, 15(8), 257; https://doi.org/10.3390/fi15080257 - 30 Jul 2023

Abstract

This paper presents the mHealth Predictive Outbreak for COVID-19 (mPOC) framework, an autonomous platform based on wearable Internet of Medical Things (IoMT) devices for outbreak prediction and monitoring. It utilizes real-time physiological and environmental data to assess user risk. The framework incorporates the analysis of psychological and user-centric data, adopting a combination of top-down and bottom-up approaches. The mPOC mechanism utilizes the bidirectional Mobile Health (mHealth) Disaster Recovery System (mDRS) and employs an intelligent algorithm to calculate the Predictive Exposure Index (PEI) and Deterioration Risk Index (DRI). These indices trigger warnings to users based on adaptive threshold criteria and provide updates to the Outbreak Tracking Center (OTC). This paper provides a comprehensive description and analysis of the framework’s mechanisms and algorithms, complemented by the performance accuracy evaluation. By leveraging wearable IoMT devices, the mPOC framework showcases its potential in disease prevention and control during pandemics, offering timely alerts and vital information to healthcare professionals and individuals to mitigate outbreaks’ impact.

(This article belongs to the Special Issue The Future Internet of Medical Things II)

Open Access Review

Features and Scope of Regulatory Technologies: Challenges and Opportunities with Industrial Internet of Things

Future Internet 2023, 15(8), 256; https://doi.org/10.3390/fi15080256 - 30 Jul 2023

Abstract

Regulatory Technology (RegTech) is an emerging set of computing and network-based information systems and practices intended to enhance and improve regulatory compliance processes. Such technologies rely on collecting exclusive information from the environment and humans through automated Internet of Things (IoT) sensors and self-reported data. The key enablers of RegTech are the increased capabilities and reduced cost of IoT and Artificial Intelligence (AI) technologies. This article focuses on a survey of RegTech, highlighting the recent developments in various sectors. This work identifies the characteristics of existing implementations of RegTech applications in the financial industry. It examines the critical features that non-financial industries such as agriculture must address when using such technologies. We investigate the suitability of existing technologies applied in financial sectors to other industries and the potential gaps to be filled between them in terms of designing information systems for regulatory frameworks. This includes identifying specific operational parameters that are key differences between the financial and non-financial sectors that can be supported with IoT and AI technologies. These can be used by both producers of goods and services and regulators who need an affordable and efficient supervision method for managing relevant organizations.

(This article belongs to the Section Techno-Social Smart Systems)

Open Access Review

A Review of ARIMA vs. Machine Learning Approaches for Time Series Forecasting in Data Driven Networks

Future Internet 2023, 15(8), 255; https://doi.org/10.3390/fi15080255 - 30 Jul 2023

Abstract

In the broad scientific field of time series forecasting, the ARIMA models and their variants have been widely applied for half a century now due to their mathematical simplicity and flexibility in application. However, with the recent advances in the development and efficient deployment of artificial intelligence models and techniques, the view is rapidly changing, with a shift towards machine and deep learning approaches becoming apparent, even without a complete evaluation of the superiority of the new approach over the classic statistical algorithms. Our work constitutes an extensive review of the published scientific literature regarding the comparison of ARIMA and machine learning algorithms applied to time series forecasting problems, as well as the combination of these two approaches in hybrid statistical-AI models in a wide variety of data applications (finance, health, weather, utilities, and network traffic prediction). Our review has shown that the AI algorithms display better prediction performance in most applications, with a few notable exceptions analyzed in our Discussion and Conclusions sections, while the hybrid statistical-AI models steadily outperform their individual parts, utilizing the best algorithmic features of both worlds.

(This article belongs to the Special Issue Smart Data and Systems for the Internet of Things)

Open Access Review

Task Allocation Methods and Optimization Techniques in Edge Computing: A Systematic Review of the Literature

Future Internet 2023, 15(8), 254; https://doi.org/10.3390/fi15080254 - 28 Jul 2023

Abstract

Task allocation in edge computing refers to the process of distributing tasks among the various nodes in an edge computing network. The main challenges in task allocation include determining the optimal location for each task based on requirements such as processing power, storage, and network bandwidth, and adapting to the dynamic nature of the network. Different approaches for task allocation include centralized, decentralized, hybrid, and machine learning algorithms. Each approach has its strengths and weaknesses, and the choice of approach will depend on the specific requirements of the application. In more detail, the selection of the optimal task allocation method depends on the edge computing architecture and configuration type, such as mobile edge computing (MEC), cloud-edge, fog computing, peer-to-peer edge computing, etc. Thus, task allocation in edge computing is a complex, diverse, and challenging problem that requires a balance of trade-offs between multiple conflicting objectives such as energy efficiency, data privacy, security, latency, and quality of service (QoS). Recently, an increased number of research studies have emerged regarding the performance evaluation and optimization of task allocation on edge devices. While several survey articles have described the current state-of-the-art task allocation methods, this work focuses on comparing and contrasting different task allocation methods, optimization algorithms, as well as the network types that are most frequently used in edge computing systems.

Open Access Article

A Comparative Analysis of High Availability for Linux Container Infrastructures

Future Internet 2023, 15(8), 253; https://doi.org/10.3390/fi15080253 - 28 Jul 2023

Abstract

In the current era of prevailing information technology, the requirement for high availability and reliability of various types of services is critical. This paper focusses on the comparison and analysis of different high-availability solutions for Linux container environments. The objective was to identify the strengths and weaknesses of each solution and to determine the optimal container approach for common use cases. Through a series of structured experiments, basic performance metrics were collected, including average service recovery time, average transfer rate, and total number of failed calls. The container platforms tested included Docker, Kubernetes, and Proxmox. On the basis of a comprehensive evaluation, it can be concluded that Docker with Docker Swarm is generally the most effective high-availability solution for commonly used Linux containers. Nevertheless, there are specific scenarios in which Proxmox stands out, for example, when fast data transfer is a priority or when load balancing is not a critical requirement.

(This article belongs to the Special Issue Cloud Computing and High Performance Computing (HPC) Advances for Next Generation Internet)

Open Access Article

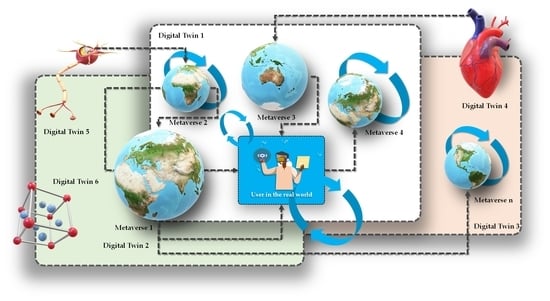

The Meta-Metaverse: Ideation and Future Directions

Future Internet 2023, 15(8), 252; https://doi.org/10.3390/fi15080252 - 27 Jul 2023

Abstract

In the era of digitalization and artificial intelligence (AI), the utilization of Metaverse technology has become increasingly crucial. As the world becomes more digitized, there is a pressing need to effectively transfer real-world assets into the digital realm and establish meaningful relationships between them. However, existing approaches have shown significant limitations in achieving this goal comprehensively. To address this, this research introduces an innovative methodology called the Meta-Metaverse, which aims to enhance the immersive experience and create realistic digital twins across various domains such as biology, genetics, economy, medicine, environment, gaming, digital twins, Internet of Things, artificial intelligence, machine learning, psychology, supply chain, social networking, smart manufacturing, and politics. The multi-layered structure of Metaverse platforms and digital twins allows for greater flexibility and scalability, offering valuable insights into the potential impact of advancing science, technology, and the internet. This article presents a detailed description of the proposed methodology and its applications, highlighting its potential to transform scientific research and inspire groundbreaking ideas in science, medicine, and technology.

Full article

(This article belongs to the Special Issue Virtual Reality and Metaverse: Impact on the Digital Transformation of Society)

►▼

Show Figures

Graphical abstract

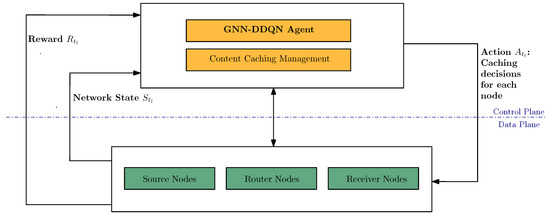

Open Access Article

Intelligent Caching with Graph Neural Network-Based Deep Reinforcement Learning on SDN-Based ICN

Future Internet 2023, 15(8), 251; https://doi.org/10.3390/fi15080251 - 26 Jul 2023

Abstract

Information-centric networking (ICN) has gained significant attention due to its in-network caching and name-based routing capabilities. Caching plays a crucial role in managing the increasing network traffic and improving the content delivery efficiency. However, caching faces challenges as routers have limited cache space while the network hosts tens of thousands of items. This paper focuses on enhancing the cache performance by maximizing the cache hit ratio in the context of software-defined networking–ICN (SDN-ICN). We propose a statistical model that generates users’ content preferences, incorporating key elements observed in real-world scenarios. Furthermore, we introduce a graph neural network–double deep Q-network (GNN-DDQN) agent to make caching decisions for each node based on the user request history. Simulation results demonstrate that our caching strategy achieves a cache hit ratio 34.42% higher than the state-of-the-art policy. We also establish the robustness of our approach, consistently outperforming various benchmark strategies.

(This article belongs to the Special Issue Recent Advances in Information-Centric Networks (ICNs))

Open Access Article

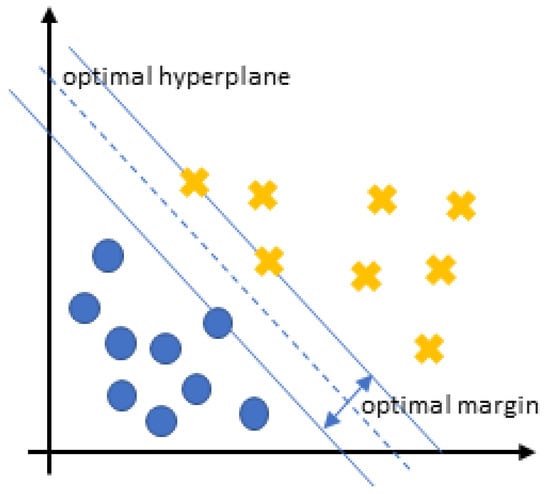

A Novel Approach for Fraud Detection in Blockchain-Based Healthcare Networks Using Machine Learning

Future Internet 2023, 15(8), 250; https://doi.org/10.3390/fi15080250 - 26 Jul 2023

Abstract

Recently, the advent of blockchain (BC) has sparked a digital revolution in different fields, such as finance, healthcare, and supply chain. It is used by smart healthcare systems to provide transparency and control for personal medical records. However, BC and healthcare integration still faces many challenges, such as storing patient data and privacy and security issues. In the context of security, new attacks target different parts of the BC network, such as nodes, consensus algorithms, Smart Contracts (SC), and wallets. Fraudulent data insertion can have serious consequences on the integrity and reliability of the BC, as it can compromise the trustworthiness of the information stored on it and lead to incorrect or misleading transactions. Detecting and preventing fraudulent data insertion is crucial for maintaining the credibility of the BC as a secure and transparent system for recording and verifying transactions. SCs control the transfer of assets, which is why they may be subject to several adversarial attacks. Therefore, many approaches have been proposed to detect vulnerabilities and attacks in SCs, such as utilizing programming tools. However, these proposals are inadequate against newly emerging vulnerabilities and attacks. Artificial Intelligence technology is robust in analyzing and detecting new attacks in every part of the BC network. Therefore, this article proposes a system architecture for detecting fraudulent transactions and attacks in the BC network based on Machine Learning (ML). It is composed of two stages: (1) Using ML to check medical data from sensors and block abnormal data from entering the blockchain network. (2) Using the same ML to check transactions in the blockchain, storing normal transactions, and marking abnormal ones as novel attacks in the attacks database. To build our system, we utilized two datasets and six machine learning algorithms (Logistic Regression, Decision Tree, KNN, Naive Bayes, SVM, and Random Forest). The results demonstrate that the Random Forest algorithm outperformed the others, achieving the best accuracy, execution time, and scalability. It was therefore considered the best of the algorithms for tackling the research problem. Moreover, the security analysis of the proposed system proves its robustness against several attacks which threaten the functioning of the blockchain-based healthcare application.

Full article

(This article belongs to the Section Cybersecurity)

►▼

Show Figures

Figure 1

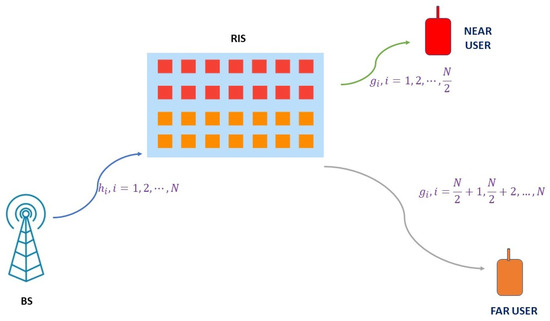

Open Access Article

RIS-Assisted Fixed NOMA: Outage Probability Analysis and Transmit Power Optimization

Future Internet 2023, 15(8), 249; https://doi.org/10.3390/fi15080249 - 25 Jul 2023

Abstract

Reconfigurable intelligent surface (RIS)-assisted non-orthogonal multiple access (NOMA) has the ability to overcome the challenges of the wireless environment like random fluctuations, shadowing, and mobility in an energy efficient way when compared to multiple input-multiple output (MIMO)-NOMA systems. The NOMA system can deliver controlled channel gains, improved coverage, increased energy efficiency, and enhanced fairness in resource allocation with the help of RIS. RIS-assisted NOMA will be one of the primary potential components of sixth-generation (6G) networks, due to its appealing advantages. The analytical outage probability expressions for smart RIS-assisted fixed NOMA (FNOMA) are derived in this paper, taking into account the instances of RIS as a smart reflector (SR) and an access point (AP). The analytical and simulation findings are found to be extremely comparable. In order to effectively maximize the sum capacity, the formulas for optimal powers to be assigned for a two-user case are also established. According to simulations, RIS-assisted FNOMA surpasses FNOMA in terms of outage and sum capacity. With the aid of RIS and the optimal power assignment, RIS-AP-FNOMA offers ≈62% improvement in sum capacity over the FNOMA system for a signal-to-noise ratio (SNR) of 10 dB and 32 elements in RIS. A significant improvement is also brought about by the increase in reflective elements.

(This article belongs to the Special Issue Moving towards 6G Wireless Technologies)

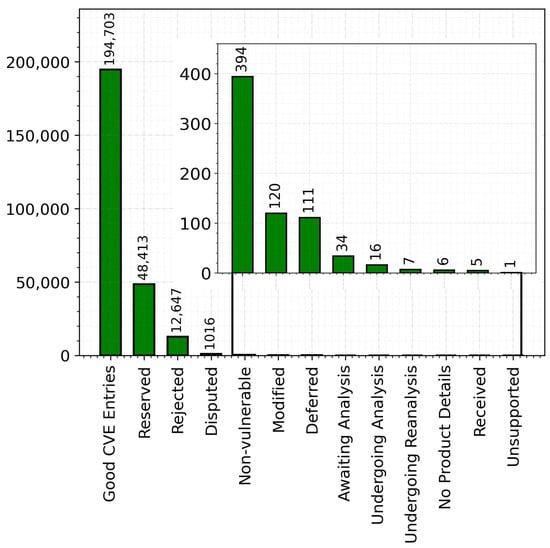

Open Access Article

Unveiling the Landscape of Operating System Vulnerabilities

Future Internet 2023, 15(7), 248; https://doi.org/10.3390/fi15070248 - 24 Jul 2023

Abstract

Operating systems play a crucial role in computer systems, serving as the fundamental infrastructure that supports a wide range of applications and services. However, they are also prime targets for malicious actors seeking to exploit vulnerabilities and compromise system security. This is a crucial area that requires active research; however, OS vulnerabilities have not been actively studied in recent years. Therefore, we conduct a comprehensive analysis of OS vulnerabilities, aiming to enhance the understanding of their trends, severity, and common weaknesses. Our research methodology encompasses data preparation, sampling of vulnerable OS categories and versions, and an in-depth analysis of trends, severity levels, and types of OS vulnerabilities. We scrape the high-level data from reliable and recognized sources to generate two refined OS vulnerability datasets: one for OS categories and another for OS versions. Our study reveals the susceptibility of popular operating systems such as Windows, Windows Server, Debian Linux, and Mac OS. Specifically, Windows 10, Windows 11, Android (v11.0, v12.0, v13.0), Windows Server 2012, Debian Linux (v10.0, v11.0), Fedora 37, and HarmonyOS 2 are identified as the most vulnerable OS versions in recent years (2021–2022). Notably, these vulnerabilities exhibit a high severity, with maximum CVSS scores falling into the 7–8 and 9–10 ranges. Common vulnerability types, including CWE-119, CWE-20, CWE-200, and CWE-787, are prevalent in these OSs and require specific attention from OS vendors. The findings on trends, severity, and types of OS vulnerabilities from this research will serve as a valuable resource for vendors, security professionals, and end-users, empowering them to enhance OS security measures, prioritize vulnerability management efforts, and make informed decisions to mitigate risks associated with these vulnerabilities.

(This article belongs to the Section Smart System Infrastructure and Applications)

Open Access Article

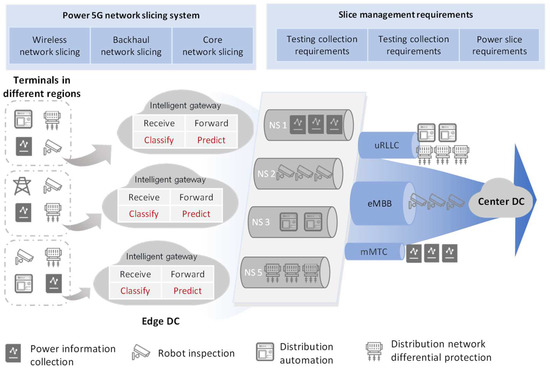

A Carrying Method for 5G Network Slicing in Smart Grid Communication Services Based on Neural Network

Future Internet 2023, 15(7), 247; https://doi.org/10.3390/fi15070247 - 20 Jul 2023

Abstract

When applying 5G network slicing technology, the operator’s network resources in the form of mutually isolated logical network slices provide specific service requirements and quality of service guarantees for smart grid communication services. In the face of the new situation of 5G, which comprises the surge in demand for smart grid communication services and service types, as well as the digital and intelligent development of communication networks, it is even more important to provide a self-intelligent resource allocation and carrying method when slicing resources are allocated. To this end, a carrying method based on a neural network is proposed. The objective is to establish a hierarchical scheduling system for smart grid communication services at the power smart gateway at the edge, where (i) intelligent classification and matching of smart grid communication services to adapt to the characteristics of 5G network slicing and (ii) dynamic prediction of traffic in the slicing network are both realized. This hierarchical scheduling system extracts the data features of the services and encodes the data through a one-dimensional Convolutional Neural Network (1D CNN) in order to achieve intelligent classification and matching of smart grid communication services. The system is also combined with a Bidirectional Long Short-Term Memory Neural Network (BILSTM) in order to achieve dynamic prediction of time-series-based traffic in the slicing network. The simulation results validate the feasibility of a service classification model based on a 1D CNN and a traffic prediction model based on BILSTM for smart grid communication services.

(This article belongs to the Special Issue Edge AI: Applications of Edge Computing and Artificial Intelligence in IoT)

Open Access Article

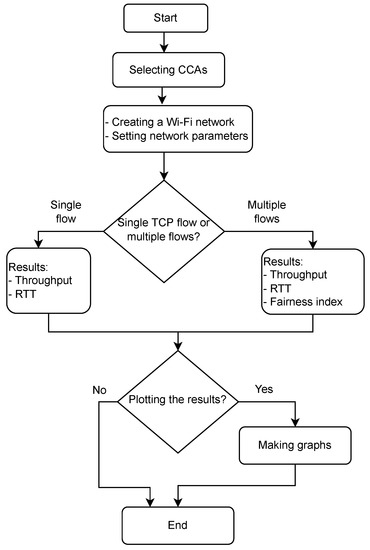

An Accurate Platform for Investigating TCP Performance in Wi-Fi Networks

Future Internet 2023, 15(7), 246; https://doi.org/10.3390/fi15070246 - 19 Jul 2023

Abstract

An increasing number of devices are connecting to the Internet via Wi-Fi networks, ranging from mobile phones to Internet of Things (IoT) devices. Moreover, Wi-Fi technology has undergone gradual development, with various standards and implementations. In a Wi-Fi network, a Wi-Fi client typically uses the Transmission Control Protocol (TCP) for its applications. Hence, it is essential to understand and quantify the TCP performance in such an environment. This work presents an emulator-based approach for investigating the TCP performance in Wi-Fi networks in a time- and cost-efficient manner. We introduce a new platform, which leverages the Mininet-WiFi emulator to construct various Wi-Fi networks for investigation while considering actual TCP implementations. The platform uniquely includes tools and scripts to assess TCP performance in the Wi-Fi networks quickly. First, to confirm the accuracy of our platform, we compare the emulated results to the results in a real Wi-Fi network, where the bufferbloat problem may occur. The two results are not only similar but also usable for finding the bufferbloat condition under different methods of TCP congestion control. Second, we conduct a similar evaluation in scenarios with the Wi-Fi link as a bottleneck and those with varying signal strengths. Third, we use the platform to compare the fairness performance of TCP congestion control algorithms in a Wi-Fi network with multiple clients. The results show the efficiency and convenience of our platform in recognizing TCP behaviors.

(This article belongs to the Special Issue State-of-the-Art Future Internet Technology in Japan 2022-2023)

Open Access Article

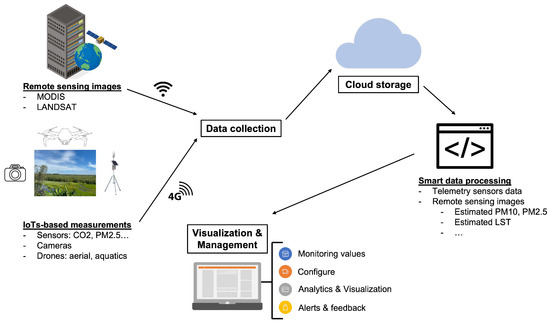

An IoT System and MODIS Images Enable Smart Environmental Management for Mekong Delta

Future Internet 2023, 15(7), 245; https://doi.org/10.3390/fi15070245 - 18 Jul 2023

Abstract

The smart environmental management system proposed in this work offers a new approach to environmental monitoring by utilizing data from IoT stations and MODIS satellite imagery. The system is designed to be deployed in vast regions, such as the Mekong Delta, with low building and operating costs, making it a cost-effective solution for environmental monitoring. The system leverages telemetry data collected by IoT stations in combination with MODIS MOD09GA, MOD11A1, and MCD19A2 daily image products to develop computational models that calculate the values of land surface temperature (LST) and of the mass concentrations of 2.5 µm and 10 µm particulate matter (PM2.5 and PM10) in areas without IoT stations. The MOD09GA product provides land surface spectral reflectance from visible to shortwave infrared wavelengths to determine land cover types. The MOD11A1 product provides thermal infrared emission from the land surface to compute LST. The MCD19A2 product provides aerosol optical depth values to detect the presence of atmospheric aerosols, e.g., PM2.5 and PM10. The collected data, including remote sensing images and telemetry sensor data, are preprocessed to eliminate redundancy and stored in cloud storage services for further processing. This allows for automatic retrieval and computation of the data by the smart data processing engine, which is designed to process various data types including images and videos from cameras and drones. The calculated values are then made available through a graphical user interface (GUI) that can be accessed through both desktop and mobile devices. The GUI provides real-time visualization of the monitoring values, as well as alerts to administrators based on predetermined rules and values of the data. This allows administrators to easily monitor the system, configure the system by setting alerting rules or calibrating the ground stations, and take appropriate action in response to alerts.
Experimental results from the implementation of the system in Dong Thap Province in the Mekong Delta show that the linear regression models for PM2.5 and PM10 estimations from MCD19A2 AOD values have correlation coefficients of 0.81 and 0.68, and RMSEs of 4.11 and 5.74 µg/m

Open Access Review

Self-Healing in Cyber–Physical Systems Using Machine Learning: A Critical Analysis of Theories and Tools

Future Internet 2023, 15(7), 244; https://doi.org/10.3390/fi15070244 - 17 Jul 2023

Abstract

The rapid advancement of networking, computing, sensing, and control systems has introduced a wide range of cyber threats, including those from new devices deployed during the development of scenarios. With recent advancements in automobiles, medical devices, smart industrial systems, and other technologies, system failures resulting from external attacks or internal process malfunctions are increasingly common. Restoring the system’s stable state requires autonomous intervention through the self-healing process to maintain service quality. This paper, therefore, aims to analyse the state of the art and identify where self-healing using machine learning can be applied to cyber–physical systems to enhance security and prevent failures within the system. The paper describes three key components of self-healing functionality in computer systems: anomaly detection, fault alert, and fault auto-remediation. The significance of these components is that self-healing functionality cannot be practical without considering all three. Understanding the self-healing theories that form the guiding principles for implementing these functionalities with real-life implications is crucial. There are strong indications that self-healing functionality in the cyber–physical system is an emerging area of research that holds great promise for the future of computing technology. It has the potential to provide seamless self-organising and self-restoration functionality to cyber–physical systems, leading to increased security of systems and improved user experience. For instance, a functional self-healing system implemented on a power grid will react autonomously when a threat or fault occurs, without requiring human intervention to restore power to communities and preserve critical services after power outages or defects.
This paper presents the existing vulnerabilities, threats, and challenges and critically analyses the current self-healing theories and methods that use machine learning for cyber–physical systems.

(This article belongs to the Section Smart System Infrastructure and Applications)

News

31 July 2023

MDPI’s 2022 Best PhD Thesis Awards in Computer Science and Mathematics—Winners Announced

31 July 2023

MDPI’s 2022 Young Investigator Awards in Computer Science and Mathematics—Winners Announced

Topics

Topic in

Applied Sciences, Electronics, Future Internet, JCP, Sensors

Cyber-Physical Security for IoT Systems

Topic Editors: Keping Yu, Chinmay Chakraborty

Deadline: 30 August 2023

Topic in

Entropy, Future Internet, Healthcare, MAKE, Sensors

Communications Challenges in Health and Well-Being

Topic Editors: Dragana Bajic, Konstantinos Katzis, Gordana Gardasevic

Deadline: 30 November 2023

Topic in

Algorithms, Entropy, Future Internet, Mathematics, Symmetry

Complex Systems and Network Science

Topic Editors: Massimo Marchiori, Latora Vito

Deadline: 31 December 2023

Topic in

Administrative Sciences, Future Internet, Information, Smart Cities, Social Sciences, Technologies, Urban Science

From ChatGPT to GovGPT: The Future of Digital Government

Topic Editors: Liang Ma, Yueping Zheng, Ziteng Fan

Deadline: 31 January 2024

Special Issues

Special Issue in

Future Internet

Future Intelligent Vehicular Networks toward 6G

Guest Editors: Paulo Mendes, Eduardo Cerqueira, Denis Rosário

Deadline: 25 August 2023

Special Issue in

Future Internet

Artificial Intelligence (AI) and Big Data Technologies for Designing 6G Networks to Enable Future Networked Societies

Guest Editors: Rashid Mehmood, Mohsen Maadani, Eduardo Cerqueira, Gyu Myoung Lee

Deadline: 31 August 2023

Special Issue in

Future Internet

6G Wireless Communication Systems: Applications, Opportunities and Challenges II

Guest Editors: Raed A. Abd-Alhameed, Kelvin Anoh, Yousef Dama, Simeon Keates, Chan Hwang See

Deadline: 20 September 2023

Special Issue in

Future Internet

6G Wireless Channel Measurements and Models: Trends and Challenges

Guest Editor: Seong Ki Yoo

Deadline: 30 September 2023

Topical Collections

Topical Collection in

Future Internet

Featured Reviews of Future Internet Research

Collection Editor: Dino Giuli

Topical Collection in

Future Internet

5G/6G Networks for the Internet of Things: Communication Technologies and Challenges

Collection Editor: Sachin Sharma

Topical Collection in

Future Internet

Computer Vision, Deep Learning and Machine Learning with Applications

Collection Editors: Remus Bard, Arpad Gellert

Topical Collection in

Future Internet

Innovative People-Centered Solutions Applied to Industries, Cities and Societies

Collection Editors: Dino Giuli, Filipe Portela