Journal Description

Computation

Computation is a peer-reviewed journal of computational science and engineering published monthly online by MDPI.

- Open Access: free for readers, with article processing charges (APC) paid by authors or their institutions.

- High Visibility: indexed within Scopus, ESCI (Web of Science), CAPlus / SciFinder, Inspec, dblp, and other databases.

- Journal Rank: CiteScore - Q2 (Applied Mathematics)

- Rapid Publication: manuscripts are peer-reviewed and a first decision is provided to authors approximately 16.3 days after submission; acceptance to publication is undertaken in 3.7 days (median values for papers published in this journal in the first half of 2023).

- Recognition of Reviewers: reviewers who provide timely, thorough peer-review reports receive vouchers entitling them to a discount on the APC of their next publication in any MDPI journal, in appreciation of the work done.

Impact Factor: 2.2 (2022); 5-Year Impact Factor: 2.2 (2022)

Latest Articles

A Parametric Family of Triangular Norms and Conorms with an Additive Generator in the Form of an Arctangent of a Linear Fractional Function

Computation 2023, 11(8), 155; https://doi.org/10.3390/computation11080155 - 08 Aug 2023

Abstract

At present, fuzzy modeling has established itself as an effective tool for designing and developing systems for various purposes that are used to solve problems of control, diagnostics, forecasting, and decision making. One of the most important problems is the choice and justification of an appropriate functional representation of the main fuzzy operations. It is known that, in the class of rational functions, such operations can be represented by additive generators in the form of a linear fractional function, a logarithm of a linear fractional function, and an arctangent of a linear fractional function. The paper is devoted to the latter case. Restrictions on the parameters under which the arctangent of a linear fractional function is an increasing or decreasing generator are defined. For each case, a corresponding fuzzy operation (a triangular norm or a conorm) is constructed. The theoretical significance of the results lies in the fact that the obtained parametric families enrich the theory of Archimedean triangular norms and conorms and provide additional opportunities for the functional representation of fuzzy operations in the framework of fuzzy modeling. In addition, we formed a scheme for studying functions that can be considered additive generators and for constructing the corresponding fuzzy operations.

(This article belongs to the Special Issue Control Systems, Mathematical Modeling and Automation II)
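As an illustration of how a triangular norm is obtained from a decreasing additive generator g with g(1) = 0, via T(x, y) = g⁻¹(min(g(x) + g(y), g(0))), the Python sketch below uses one hypothetical member of the arctangent-of-a-linear-fractional-function class, g(x) = arctan((1 − x)/(x + a)) with a > 0. This particular form and the parameter name `a` are our assumptions, not the parametric family derived in the paper. Because g(0) = arctan(1/a) is finite, this generator yields a nilpotent t-norm; the dual conorm is taken as S(x, y) = 1 − T(1 − x, 1 − y).

```python
import math

def make_arctan_tnorm(a: float):
    """Hypothetical example: t-norm generated by g(x) = arctan((1 - x) / (x + a)), a > 0."""
    if a <= 0:
        raise ValueError("a must be positive so that g is decreasing on [0, 1]")

    def g(x: float) -> float:
        # Decreasing additive generator with g(1) = 0 and finite g(0) = arctan(1/a).
        return math.atan((1.0 - x) / (x + a))

    def g_inv(y: float) -> float:
        # Inverse of g on [0, g(0)]: solve tan(y) = (1 - x) / (x + a) for x.
        t = math.tan(y)
        return (1.0 - a * t) / (1.0 + t)

    g0 = g(0.0)

    def tnorm(x: float, y: float) -> float:
        # Pseudo-inverse construction: clip the sum at g(0) (nilpotent case);
        # max() guards against tiny negative round-off near 0.
        return max(0.0, g_inv(min(g(x) + g(y), g0)))

    def conorm(x: float, y: float) -> float:
        # Dual t-conorm with respect to the standard negation n(x) = 1 - x.
        return 1.0 - tnorm(1.0 - x, 1.0 - y)

    return tnorm, conorm

T, S = make_arctan_tnorm(0.5)
```

The boundary conditions T(x, 1) = x and S(x, 0) = x follow directly from g(1) = 0, which is a quick sanity check for any candidate generator.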

Open Access Article

Revealing the Genetic Code Symmetries through Computations Involving Fibonacci-like Sequences and Their Properties

Computation 2023, 11(8), 154; https://doi.org/10.3390/computation11080154 - 07 Aug 2023

Abstract

In this work, we present a new way of studying the mathematical structure of the genetic code. This study relies on mathematical computations involving five Fibonacci-like sequences; a few of their “seeds” or “initial conditions” are chosen according to the chemical and physical data of the three amino acids serine, arginine and leucine, which play a prominent role in a recent symmetry classification scheme of the genetic code. It appears that these sequences, of the same kind as the famous Fibonacci sequence, are, apart from their usual recurrence relations, highly intertwined through many useful linear relationships. Using these sequences, as well as various sums or linear combinations of them, we derive several physical and chemical quantities of interest, such as the total number of coding codons, 61, obeying various degeneracy patterns, the detailed number of H/CNOS atoms, and the integer molecular mass (or nucleon number) in the side chains of the coded amino acids, also in various degeneracy patterns, in agreement with those described in the literature. As a by-product, we also obtain an accurate description of the chemical structure of the four ribonucleotides uridine monophosphate (UMP), cytidine monophosphate (CMP), adenosine monophosphate (AMP) and guanosine monophosphate (GMP), the building blocks of RNA whose groupings in threes constitute the triplet codons. In summary, we find a full mathematical and chemical connection with the “ideal sextet’s classification scheme” mentioned above, as well as with others, notably the Findley–Findley–McGlynn and Rumer’s symmetrical classifications.

(This article belongs to the Special Issue Computations in Mathematics, Mathematical Education, and Science)
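A Fibonacci-like sequence obeys the Fibonacci recurrence G(n) = G(n − 1) + G(n − 2) but starts from arbitrary seeds. The minimal Python sketch below checks two generic facts behind such "linear relationships": every term is a fixed linear combination of the two seeds, G(n) = G(0)·F(n − 1) + G(1)·F(n) for n ≥ 1 (F being the classical Fibonacci numbers), and the termwise sum of two Fibonacci-like sequences is again Fibonacci-like. The seeds used here are placeholders, not the amino-acid-derived values of the paper.

```python
def fib_like(seed0: int, seed1: int, n: int) -> list[int]:
    """First n terms of G(0)=seed0, G(1)=seed1, G(k)=G(k-1)+G(k-2)."""
    seq = [seed0, seed1]
    while len(seq) < n:
        seq.append(seq[-1] + seq[-2])
    return seq[:n]

FIB = fib_like(0, 1, 30)  # classical Fibonacci numbers F(0), F(1), ...

def via_seeds(seed0: int, seed1: int, k: int) -> int:
    """Closed linear form G(k) = seed0*F(k-1) + seed1*F(k), valid for k >= 1."""
    return seed0 * FIB[k - 1] + seed1 * FIB[k]

# Placeholder seeds (hypothetical, not taken from amino-acid data).
a = fib_like(2, 5, 20)
b = fib_like(7, 3, 20)
```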

Open Access Article

Uncoupling Techniques for Multispecies Diffusion–Reaction Model

Computation 2023, 11(8), 153; https://doi.org/10.3390/computation11080153 - 04 Aug 2023

Abstract

We consider a multispecies model described by a coupled system of diffusion–reaction equations, in which the coupling and nonlinearity appear in the reaction part. We construct a semi-discrete form using a finite volume approximation in space. A fully implicit scheme is used for the time approximation, which requires solving a coupled nonlinear system of equations at each time step. This paper presents two uncoupling techniques, based on the explicit–implicit scheme and on the operator-splitting method. In the explicit–implicit scheme, we take the concentration of one species in the coupling term from the previous time layer to obtain a linear uncoupled system of equations. The second approach is based on the operator-splitting technique, in which we first solve uncoupled equations with the diffusion operator and then solve the equations with the local reaction operator. Stability estimates are derived for both proposed uncoupling schemes. We present a numerical investigation of the uncoupling techniques with varying time step sizes and different scales of the diffusion coefficient.
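The two uncoupling ideas can be sketched on a toy problem. The NumPy code below assumes a hypothetical 1D two-species model, u_t = d1·u_xx − k·u·v and v_t = d2·v_xx − k·u·v with zero-flux boundaries, discretized by a cell-centred finite-volume Laplacian. In the explicit–implicit variant, each species' equation is implicit but uses the other species from the previous time layer in the coupling term; in the splitting variant, implicit diffusion is followed by a local reaction substep, here taken as explicit Euler (our choice, not necessarily the paper's). Dense solves are used only for brevity.

```python
import numpy as np

# Hypothetical model (not the paper's equations):
#   u_t = d1 * u_xx - k * u * v,   v_t = d2 * v_xx - k * u * v,   zero-flux boundaries.

def fv_laplacian(n: int, h: float) -> np.ndarray:
    """Cell-centred finite-volume Laplacian with zero-flux (Neumann) boundaries."""
    A = np.zeros((n, n))
    for i in range(n):
        if i > 0:            # flux through the left face
            A[i, i - 1] += 1.0
            A[i, i] -= 1.0
        if i < n - 1:        # flux through the right face
            A[i, i + 1] += 1.0
            A[i, i] -= 1.0
    return A / h**2

def explicit_implicit(u, v, L, d1, d2, k, tau, steps):
    """Implicit in time; the other species in the coupling term comes from the previous layer."""
    I = np.eye(len(u))
    for _ in range(steps):
        u_new = np.linalg.solve(I / tau - d1 * L + k * np.diag(v), u / tau)  # v lagged
        v_new = np.linalg.solve(I / tau - d2 * L + k * np.diag(u), v / tau)  # u lagged
        u, v = u_new, v_new
    return u, v

def operator_splitting(u, v, L, d1, d2, k, tau, steps):
    """Each step: implicit diffusion per species, then the local reaction in every cell."""
    I = np.eye(len(u))
    Au, Av = I / tau - d1 * L, I / tau - d2 * L
    for _ in range(steps):
        u = np.linalg.solve(Au, u / tau)
        v = np.linalg.solve(Av, v / tau)
        r = k * u * v                    # reaction substep: explicit Euler, cell by cell
        u, v = u - tau * r, v - tau * r
    return u, v

n = 50
h = 1.0 / n
x = (np.arange(n) + 0.5) * h
u0 = 0.5 * (1 + np.cos(np.pi * x))
v0 = 0.5 * (1 - np.cos(np.pi * x))
L = fv_laplacian(n, h)
ue, ve = explicit_implicit(u0, v0, L, 0.01, 0.001, 1.0, 0.01, 100)
us, vs = operator_splitting(u0, v0, L, 0.01, 0.001, 1.0, 0.01, 100)
```

Both variants only ever solve linear, single-species systems, which is the practical payoff of uncoupling compared with a fully implicit Newton solve of the coupled system.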

Open Access Article

Enhancing the Hardware Pipelining Optimization Technique of the SHA-3 via FPGA

Computation 2023, 11(8), 152; https://doi.org/10.3390/computation11080152 - 03 Aug 2023

Abstract

Information in the form of text, images, video, and audio is transmitted across multiple insecure routing hops. This multi-hop digital data transfer makes secure transmission with confidentiality and integrity imperative. Protection of the transmitted data can be achieved via hashing algorithms, which in particular make it feasible to ensure data integrity. The advanced cryptographic Secure Hash Algorithm 3 (SHA-3) is resistant to cryptanalytic attacks and is widely preferred for its long-term security in various applications. However, because of the ever-increasing size of the data to be transmitted, an effective improvement is required to meet real-time computation requirements through multiple types of optimization. FPGAs are an ideal mechanism for improving algorithm performance and other metrics, such as throughput (Gbps), frequency (MHz), efficiency (Mbps/slices), area (slices), and power consumption. Providing upgraded computer architectures for SHA-3 is an active area of research, with continuous performance improvements. In this article, we focus on enhancing the hardware performance metrics of throughput and efficiency by reducing the area cost of SHA-3 for all output lengths (224, 256, 384, and 512 bits). Our approach introduces a novel architectural design based on pipelining, which is combined with a simplified format for the round constant (RC) generator in the Iota (ι) [...]
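For context on the Iota step: in the standard Keccak-f[1600] permutation (FIPS 202), ι XORs a 64-bit round constant into lane (0, 0), and the 24 constants come from an 8-bit linear-feedback shift register with polynomial x⁸ + x⁶ + x⁵ + x⁴ + 1. The Python sketch below reproduces only that standard generation procedure; it does not model the paper's simplified RC format or its pipelined hardware, which the abstract does not detail. One option for a hardware generator is simply to store the 24 resulting values.

```python
def rc_bit(t: int) -> int:
    """Output bit rc(t) of the FIPS 202 LFSR (polynomial x^8 + x^6 + x^5 + x^4 + 1)."""
    if t % 255 == 0:
        return 1
    r = [1, 0, 0, 0, 0, 0, 0, 0]
    for _ in range(t % 255):
        r = [0] + r                  # shift: prepend a zero bit (9 bits now)
        for pos in (0, 4, 5, 6):     # feedback taps driven by the bit shifted out
            r[pos] ^= r[8]
        r = r[:8]                    # truncate back to 8 bits
    return r[0]

def round_constant(ir: int, ell: int = 6) -> int:
    """64-bit round constant for round ir of Keccak-f[1600] (lane width 2**ell = 64)."""
    rc = 0
    for j in range(ell + 1):
        if rc_bit(j + 7 * ir):
            rc |= 1 << ((1 << j) - 1)  # only bit positions 2**j - 1 can be set
    return rc

ROUND_CONSTANTS = [round_constant(ir) for ir in range(24)]
```

Because at most seven bit positions (0, 1, 3, 7, 15, 31, 63) can ever be nonzero, each constant is fully described by 7 bits, which is the kind of redundancy a compact RC generator can exploit.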

Open Access Article

Finite Element Analysis of ACL Reconstruction-Compatible Knee Implant Design with Bone Graft Component

Computation 2023, 11(8), 151; https://doi.org/10.3390/computation11080151 - 02 Aug 2023

Abstract

Knee osteoarthritis is a degenerative musculoskeletal disease of the soft tissues in the knee joint that affects millions of people. Although drug intake can slow its progression, total knee arthroplasty has been the gold standard for treating this disease. This surgical procedure involves replacing the tibiofemoral joint with an implant. The most common implants require the removal of either the anterior cruciate ligament (ACL) alone or both cruciate ligaments, which alters the native knee joint mechanics. Bi-cruciate-retaining implants have been developed but are not frequently used because of the complexity of the procedure and the occurrence of intraoperative failures such as ACL and tibial eminence rupture. In this study, a knee joint implant was modified to include a bone graft intended to aid ACL reconstruction. The mechanical behavior of the bone graft was studied through finite element analysis (FEA). The results show that the peak Christensen safety factor for cortical bone is 0.021, while the maximum shear stress in the cancellous bone is 3 MPa. This indicates that the cortical bone could withstand the walking load, whereas the cancellous bone could fail under ACL loads, depending on the graft shear strength, which varies with the graft source. Before implementation, the bone graft geometry would need to be optimized for stress distribution and the effectiveness of bone healing would need to be evaluated.

(This article belongs to the Section Computational Engineering)
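The maximum shear stress reported for the cancellous bone is, under the usual (Tresca) definition, half the difference between the largest and smallest principal stresses. A minimal NumPy sketch, assuming a symmetric Cauchy stress tensor in MPa; the shear strength passed in is a hypothetical placeholder, since the abstract notes that graft shear strength depends on the graft source. This does not reproduce the Christensen criterion used in the study.

```python
import numpy as np

def max_shear_stress(sigma: np.ndarray) -> float:
    """Tresca maximum shear stress (sigma_1 - sigma_3) / 2 of a symmetric 3x3 stress tensor."""
    principal = np.linalg.eigvalsh(sigma)  # principal stresses, ascending order
    return 0.5 * float(principal[-1] - principal[0])

def shear_safety_factor(sigma: np.ndarray, shear_strength: float) -> float:
    """Strength / demand; values below 1 indicate predicted shear failure."""
    return shear_strength / max_shear_stress(sigma)

# Two simple states that both give a 3 MPa maximum shear stress.
uniaxial = np.diag([6.0, 0.0, 0.0])
pure_shear = np.array([[0.0, 3.0, 0.0],
                       [3.0, 0.0, 0.0],
                       [0.0, 0.0, 0.0]])
```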

Open Access Article

The Problem of Effective Evacuation of the Population from Floodplains under Threat of Flooding: Algorithmic and Software Support with Shortage of Resources

Computation 2023, 11(8), 150; https://doi.org/10.3390/computation11080150 - 01 Aug 2023

Abstract

Extreme flooding of the floodplains of large lowland rivers poses a danger to the population because of the vastness of the flooded areas. This requires organizing safe evacuation under a shortage of time and transport resources, owing to significant differences in the moments at which different parts of the territory are flooded. We consider the case of a shortage of evacuation vehicles, in which the safe evacuation of the entire population to permanent evacuation points is impossible. Therefore, the evacuation is divided into two stages, with temporary evacuation points organized on evacuation routes. Our goal is to develop a method for analyzing the minimum resource requirement for the safe evacuation of the population of floodplain territories, based on a mathematical model of flood dynamics and on minimizing the number of vehicles over a set of safe evacuation schedules. The core of the approach is a numerical hydrodynamic model in the shallow water approximation. Modeling the hydrological regime of a real water body requires a multi-layer geoinformation model of the territory with layers of relief, channel structure, and social infrastructure. High-performance computing is performed on GPUs using CUDA. The optimization problem is a variant of the resource investment problem of scheduling theory with deadlines for completing work and is solved with a heuristic algorithm. We use the results of numerical flood simulations for the northern part of the Volga-Akhtuba floodplain to plot how the minimum number of vehicles required for the safe evacuation of the population varies. The minimum transport resources depend on the water discharge in the Volga river, the start time of the evacuation, and the location of temporary evacuation points. The developed algorithm constructs a set of safe evacuation schedules for the minimum allowable number of vehicles in various flood scenarios.
The population evacuation schedules constructed for the Volga-Akhtuba floodplain can be used in practice for various vast river valleys.

(This article belongs to the Special Issue Control Systems, Mathematical Modeling and Automation II)
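To make the resource question concrete, here is a deliberately simplified Python sketch, not the paper's heuristic: each settlement is a hypothetical group of identical vehicle trips that must all finish before that settlement's flooding deadline, all vehicles are available at time 0, and trips are assigned in earliest-deadline-first order to whichever vehicle frees up first. Searching upward from one vehicle gives the smallest fleet for which this greedy schedule meets every deadline. Because the rule is greedy, the result is an upper bound on the true minimum when trip durations differ between settlements.

```python
import heapq

def edf_schedule_ok(groups, vehicles):
    """groups: (trips, trip_duration, deadline) per settlement, in hypothetical time units."""
    jobs = sorted(
        (deadline, duration)
        for trips, duration, deadline in groups
        for _ in range(trips)
    )
    free_at = [0.0] * vehicles        # min-heap of times at which vehicles become free
    for deadline, duration in jobs:   # earliest deadline first
        start = heapq.heappop(free_at)
        finish = start + duration
        if finish > deadline:
            return False              # this trip would end after the area floods
        heapq.heappush(free_at, finish)
    return True

def min_vehicles(groups, limit=1000):
    """Smallest fleet size (up to `limit`) for which the greedy EDF schedule is safe."""
    for m in range(1, limit + 1):
        if edf_schedule_ok(groups, m):
            return m
    return None                       # some trip cannot meet its deadline at all
```

For example, `min_vehicles([(4, 1.0, 2.0), (2, 1.0, 3.0)])` asks how many vehicles are needed when four one-hour trips must finish within two hours and two more within three hours.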

Open Access Article

Adaptive Sparse Grids with Nonlinear Basis in Interval Problems for Dynamical Systems

Computation 2023, 11(8), 149; https://doi.org/10.3390/computation11080149 - 01 Aug 2023

Abstract

Problems with interval uncertainties arise in many applied fields. The authors have previously developed, tested, and proved an adaptive interpolation algorithm for solving this class of problems. The idea of the algorithm is to construct a piecewise polynomial function that interpolates the dependence of the problem solution on point values of the interval parameters. The classical version of the algorithm uses polynomial interpolation on full grids, and with a large number of uncertainties it becomes difficult to apply because computational costs grow exponentially. Sparse grid interpolation requires significantly fewer computational resources than interpolation on full grids, so its use seems promising. A representative number of examples have previously confirmed the effectiveness of using adaptive sparse grids with a linear basis in the adaptive interpolation algorithm. The purpose of this paper is to apply adaptive sparse grids with a nonlinear basis to modeling dynamical systems with interval parameters. The corresponding interpolation polynomials on the quadratic basis and the fourth-degree basis are constructed. The efficiency, performance, and robustness of the proposed approach are demonstrated on a representative set of problems.
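The cost argument can be quantified by counting grid points. For a regular sparse grid of level n in d dimensions without boundary points (level multi-indices l with every l_i ≥ 1 and |l|₁ ≤ n + d − 1), the point count is Σ_{i=0}^{n−1} 2^i·C(d − 1 + i, d − 1), against (2^n − 1)^d for the full grid with the same finest mesh size. This Python sketch only counts points for regular (non-adaptive) grids; it is not the authors' adaptive algorithm and does not involve the quadratic or fourth-degree bases.

```python
from math import comb

def full_grid_points(n: int, d: int) -> int:
    """Interior points of the full tensor grid with mesh size 2**-n in each dimension."""
    return (2**n - 1) ** d

def sparse_grid_points(n: int, d: int) -> int:
    """Interior points of the regular sparse grid of level n in d dimensions."""
    # There are comb(d - 1 + i, d - 1) level vectors with |l|_1 = d + i,
    # and each contributes 2**i hierarchical points.
    return sum(2**i * comb(d - 1 + i, d - 1) for i in range(n))

for d in (1, 2, 5, 10):
    print(d, full_grid_points(6, d), sparse_grid_points(6, d))
```

In one dimension the two counts coincide; the gap opens up as the number of interval parameters d grows, since the full-grid count is exponential in d while the sparse count grows only polynomially in d for fixed n.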

Open Access Article

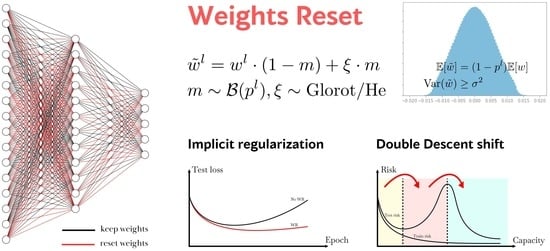

The Weights Reset Technique for Deep Neural Networks Implicit Regularization

Computation 2023, 11(8), 148; https://doi.org/10.3390/computation11080148 - 01 Aug 2023

Abstract

We present a new regularization method called Weights Reset, which periodically resets a random portion of layer weights during training using predefined probability distributions. The technique was applied and tested on several popular classification datasets: Caltech-101, CIFAR-100, and Imagenette. We compare the results with those of other traditional regularization methods. The test results demonstrate that the Weights Reset method is competitive, achieving the best performance on the Imagenette dataset and on the challenging and unbalanced Caltech-101 dataset. The method also shows potential for preventing vanishing and exploding gradients. However, this analysis is brief, and further comprehensive studies are needed to gain a deep understanding of the computational potential and limitations of the Weights Reset method. The observed results show that the Weights Reset method can be regarded as an effective extension of traditional regularization methods and can help to improve model performance and generalization.
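A minimal NumPy sketch of the reset step, assuming a fully connected layer's weight matrix, a hypothetical reset fraction `fraction`, and a Glorot-uniform distribution for the redrawn values. The paper's actual distributions, reset period, and training framework are not given in the abstract.

```python
import numpy as np

def weights_reset(W: np.ndarray, fraction: float, rng: np.random.Generator):
    """Redraw a random `fraction` of the entries of W; return (new_W, reset_mask)."""
    fan_in, fan_out = W.shape
    bound = np.sqrt(6.0 / (fan_in + fan_out))         # assumed Glorot-uniform range
    mask = rng.random(W.shape) < fraction              # which weights to reset
    fresh = rng.uniform(-bound, bound, size=W.shape)   # candidate replacement values
    return np.where(mask, fresh, W), mask

rng = np.random.default_rng(0)
W = np.full((200, 100), 5.0)   # stand-in for trained weights
W_new, mask = weights_reset(W, fraction=0.1, rng=rng)
```

In a training loop, such a function would be applied to selected layers every few epochs, with the period as a further (hypothetical) hyperparameter; the untouched entries keep their learned values.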

Full article

Graphical abstract

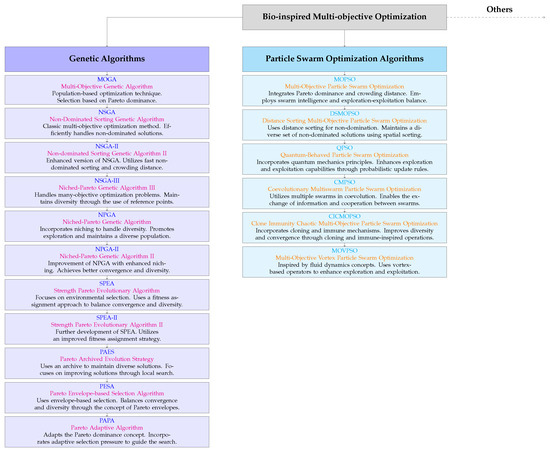

Open Access Article

Multiobjective Optimization of Fuzzy System for Cardiovascular Risk Classification

Computation 2023, 11(7), 147; https://doi.org/10.3390/computation11070147 - 23 Jul 2023

Abstract

►▼

Show Figures

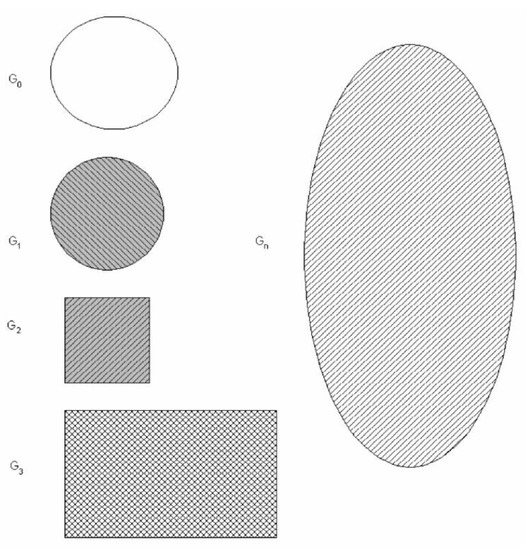

Since cardiovascular diseases (CVDs) pose a critical global concern, identifying associated risk factors remains a pivotal research focus. This study aims to propose and optimize a fuzzy system for cardiovascular risk (CVR) classification using a multiobjective approach, addressing computational aspects such as the

[...] Read more.

Since cardiovascular diseases (CVDs) pose a critical global concern, identifying associated risk factors remains a pivotal research focus. This study aims to propose and optimize a fuzzy system for cardiovascular risk (CVR) classification using a multiobjective approach, addressing computational aspects such as the configuration of the fuzzy system, the optimization process, the selection of a suitable solution from the optimal Pareto front, and the interpretability of the fuzzy logic system after optimization. The proposed system uses data including age, weight, height, gender, and systolic blood pressure to determine cardiovascular risk. The fuzzy model is based on preliminary information from the literature. Therefore, to adjust the fuzzy logic system with a multiobjective approach, the body mass index (BMI) is considered as an additional output, because data are available for this index. BMI is acknowledged as a proxy for cardiovascular risk, given the propensity for these diseases attributed to surplus adipose tissue, which can elevate blood pressure, cholesterol, and triglyceride levels, leading to arterial and cardiac damage. By employing a multiobjective approach, the study aims to balance the two outputs, cardiovascular risk classification and body mass index. For the multiobjective optimization, a set of experiments is proposed that yields an optimal Pareto front, from which the appropriate solution is then determined. The results show an adequate optimization of the fuzzy logic system that preserves the interpretability of the fuzzy sets after the optimization process. In this way, the paper contributes to advancing the use of computational techniques in the medical domain.
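Selecting a solution presupposes the nondominated (Pareto-optimal) set. A minimal Python sketch of extracting it from candidate solutions, assuming both objectives are errors to be minimized (for instance, hypothetically, a CVR misclassification rate and a BMI prediction error); it is not the optimizer used in the study. BMI itself is weight in kilograms divided by the square of height in metres.

```python
def bmi(weight_kg: float, height_m: float) -> float:
    """Body mass index in kg/m^2."""
    return weight_kg / height_m**2

def dominates(a, b) -> bool:
    """True if a is no worse than b in every objective and strictly better in one (minimization)."""
    return all(x <= y for x, y in zip(a, b)) and any(x < y for x, y in zip(a, b))

def pareto_front(points):
    """Return the nondominated points, keeping their input order."""
    return [p for p in points if not any(dominates(q, p) for q in points)]

# Hypothetical (objective 1, objective 2) values for four candidate fuzzy systems.
candidates = [(0.10, 0.50), (0.20, 0.30), (0.30, 0.40), (0.40, 0.10)]
front = pareto_front(candidates)
```

Here (0.30, 0.40) drops out because (0.20, 0.30) is better in both objectives; choosing among the remaining points is the separate decision step the abstract refers to.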

Full article

Figure 1

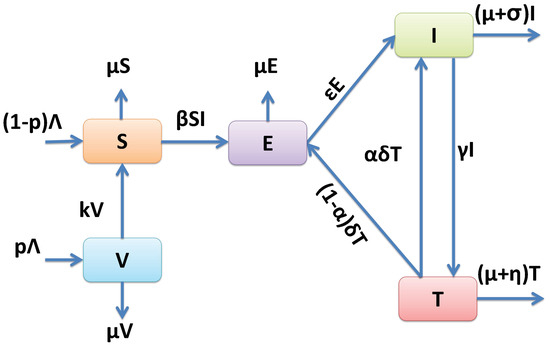

Open Access Article

Analysis of the Dynamics of Tuberculosis in Algeria Using a Compartmental VSEIT Model with Evaluation of the Vaccination and Treatment Effects

Computation 2023, 11(7), 146; https://doi.org/10.3390/computation11070146 - 21 Jul 2023

Abstract

Despite low tuberculosis (TB) mortality rates in China, Europe, and the United States, many countries, including India, South Africa, and Algeria, are still struggling to control the epidemic. This study aims to contribute to the body of knowledge on this topic and to provide a valuable tool and evidence-based guidance for Algerian healthcare managers in understanding the spread of TB and implementing control strategies. For this purpose, a compartmental mathematical model is proposed to analyze TB dynamics in Algeria and to investigate the effects of vaccination and treatment on disease outbreaks. A qualitative study is conducted to discuss the stability of both the disease-free equilibrium and the endemic equilibrium. To adapt the proposed model to the Algerian case, we estimate the model parameters using Algerian TB-reported data from 1990 to 2020. The results obtained with the proposed compartmental model show that the reproduction number [...]

(This article belongs to the Special Issue Mathematical Modeling and Study of Nonlinear Dynamic Processes)

►▼

Show Figures

Figure 1

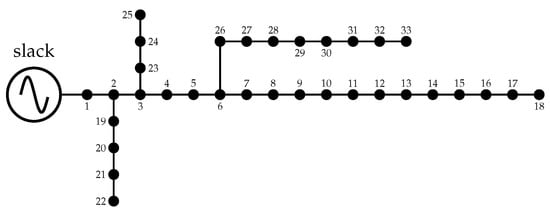

Open Access Article

Simultaneous Integration of D-STATCOMs and PV Sources in Distribution Networks to Reduce Annual Investment and Operating Costs

Computation 2023, 11(7), 145; https://doi.org/10.3390/computation11070145 - 20 Jul 2023

Abstract

This research analyzes electrical distribution networks with photovoltaic (PV) renewable generation sources and distribution static compensators (D-STATCOMs) in order to minimize the expected annual grid operating costs over a planning period of 20 years. The separate and simultaneous placement of PVs and D-STATCOMs is evaluated through a mixed-integer nonlinear programming (MINLP) model, whose binary part pertains to selecting the nodes where these devices are located and whose continuous part is associated with the power flow equations and device constraints. This optimization model is solved using the vortex search algorithm for the sake of comparison. Numerical results in the IEEE 33- and 69-bus grids demonstrate that combining PV sources and D-STATCOM devices yields the largest reduction in the expected annual grid operating costs compared with the solutions reached by each device separately, with expected reductions of about [...]

(This article belongs to the Special Issue Applications of Statistics and Machine Learning in Electronics)

Open Access Article

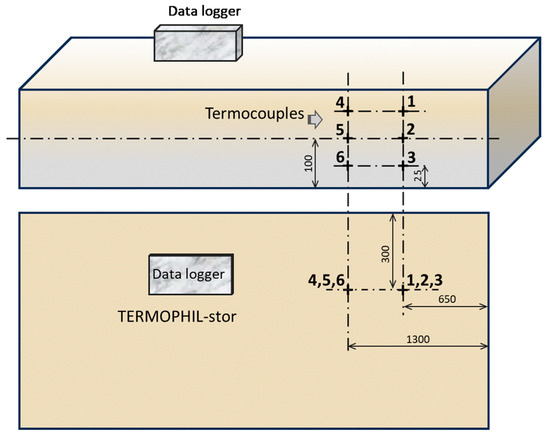

Modeling of Heat Flux in a Heating Furnace

Computation 2023, 11(7), 144; https://doi.org/10.3390/computation11070144 - 17 Jul 2023

Abstract

Modern heating furnaces use combined modes of heating the charge: at high heating temperatures, more radiation heating is used; at lower temperatures, more convection heating is used. In large heating furnaces, such as pusher furnaces, the heating of the material must be monitored zonally. Zonal heating allows the appropriate thermal regime to be set in each zone according to the desired parameters for heating the charge. The problem for each heating furnace is to set the optimum thermal regime so that, at the end of heating, the temperature field across the cross-section of the material is uniform, with a minimal temperature difference. To evaluate the heating of the charge, a mathematical model was developed to calculate the heat fluxes of the moving charge (slabs) along the length of the pusher furnace. The results are based on experimental measurements on a test slab fitted with thermocouples, with data acquisition provided by a TERMOPHIL-stor data logger placed directly on the slab. Most existing models focus only on energy balance assessment or external heat exchange. The results from the model showed reserves for changing the thermal regimes in the different zones. The model was used to compare the heating of the slabs after the rebuilding of the pusher furnace. Changing the furnace parameters and altering the heat fluxes or heating regimes in each zone contributed to more uniform heating and a reduction in specific heat consumption. The developed heat flux model can serve as part of a powerful set of tools for monitoring and controlling the thermal condition of the charge inside the furnace, as well as for evaluating the operating condition of such furnaces.

(This article belongs to the Special Issue 10th Anniversary of Computation—Computational Heat and Mass Transfer (ICCHMT 2023))
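Why radiation dominates at high temperatures can be seen from a simplified surface heat balance, q = ε·σ·(T_g⁴ − T_s⁴) + h·(T_g − T_s), where T_g and T_s are the furnace-gas and slab-surface temperatures in kelvin, ε an effective emissivity, h a convective heat transfer coefficient, and σ the Stefan–Boltzmann constant. This Python sketch uses hypothetical default values for ε and h; it is not the zonal model developed in the paper.

```python
SIGMA = 5.670374419e-8  # Stefan-Boltzmann constant, W/(m^2 K^4)

def heat_flux(t_gas: float, t_surf: float, emissivity: float = 0.8, h_conv: float = 30.0):
    """Radiative and convective heat flux (W/m^2) into a surface; temperatures in K.
    The default emissivity and h_conv are illustrative assumptions only."""
    q_rad = emissivity * SIGMA * (t_gas**4 - t_surf**4)
    q_conv = h_conv * (t_gas - t_surf)
    return q_rad, q_conv

# The same 100 K gas-to-surface difference at a low and a high temperature level.
low = heat_flux(600.0, 500.0)
high = heat_flux(1500.0, 1400.0)
```

With an identical temperature difference, the convective term is unchanged, while the radiative term grows roughly with the cube of the temperature level, matching the qualitative statement at the start of the abstract.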

Open Access Article

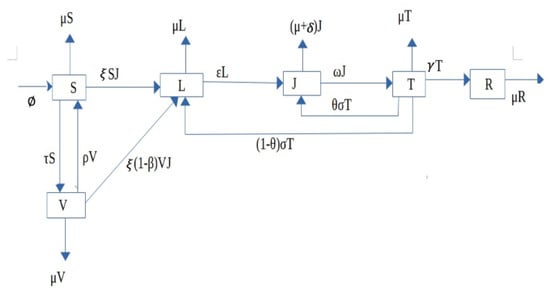

Mathematical Modelling of Tuberculosis Outbreak in an East African Country Incorporating Vaccination and Treatment

Computation 2023, 11(7), 143; https://doi.org/10.3390/computation11070143 - 17 Jul 2023

Abstract

In this paper, we develop a deterministic mathematical epidemic model for tuberculosis outbreaks in order to study the disease’s impact on a given population. We carry out a qualitative analysis of the model by showing that its solution is positive and bounded. The global stability of the model is analyzed using Lyapunov functions, and the threshold quantity of the model, the basic reproduction number, is estimated. An existence and uniqueness analysis for a Caputo fractional tuberculosis outbreak model is presented, obtained by transforming the deterministic model into a Caputo-sense model. The deterministic model is compared with real data from Uganda and Rwanda to assess how well it captures the dynamics of the disease in these countries. Furthermore, the sensitivity analysis of the parameters according to [...]

(This article belongs to the Special Issue Eco-Evolutionary and Computational Epidemiology Modeling of Complex Dynamical Systems)
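The basic reproduction number is commonly computed with the next-generation matrix method: with F collecting the rates of new infections and V the transfer rates between infected compartments, both linearized at the disease-free equilibrium, R0 is the spectral radius of F·V⁻¹. A minimal NumPy sketch for a generic SEIR-type structure (exposed E and infectious I) with hypothetical rates β, σ, γ, μ; the paper's model, which also incorporates vaccination and treatment, would lead to larger F and V matrices.

```python
import numpy as np

def r0_next_generation(F: np.ndarray, V: np.ndarray) -> float:
    """Spectral radius of F V^{-1} (next-generation matrix method)."""
    K = F @ np.linalg.inv(V)
    return float(np.max(np.abs(np.linalg.eigvals(K))))

# Hypothetical rates (per year): transmission, progression E->I, removal from I, natural death.
beta, sigma, gamma, mu = 8.0, 0.5, 2.0, 0.02

# Infected compartments ordered (E, I), linearized at the disease-free equilibrium (S/N = 1):
#   E' = beta*I - (sigma + mu)*E,   I' = sigma*E - (gamma + mu)*I
F = np.array([[0.0, beta],
              [0.0, 0.0]])
V = np.array([[sigma + mu, 0.0],
              [-sigma, gamma + mu]])
R0 = r0_next_generation(F, V)
```

For this structure the result matches the closed form β·σ / ((σ + μ)(γ + μ)), which is a useful check before moving to larger compartmental models where no simple formula is available.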

►▼

Show Figures

Figure 1

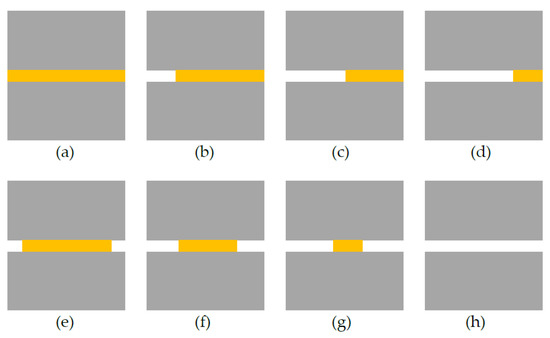

Open Access Article

Computational Fracture Modeling for Effects of Healed Crack Length and Interfacial Cohesive Properties in Self-Healing Concrete Using XFEM and Cohesive Surface Technique

Computation 2023, 11(7), 142; https://doi.org/10.3390/computation11070142 - 16 Jul 2023

Abstract

Healing patterns are a critical factor influencing the fracture mechanism of self-healing concrete (SHC) structures. Partially healed cracks can occur even under the normal operating conditions of the structure, such as sustained applied loads or rapid crack propagation. In this paper, we computationally investigate the effects of the two main factors that control healing patterns, namely the healed crack length and the interfacial cohesive properties between the solidified healing agent and the cracked surfaces, on the load-carrying capacity and fracture mechanism of healed SHC samples. The proposed computational modeling framework is based on the extended finite element method (XFEM) and the cohesive surface (CS) technique to model the fracture and debonding mechanism of 2D healed SHC samples under a uniaxial tensile test. The interfacial cohesive properties and the healed crack length have significant effects on the load-carrying capacity, on crack initiation and propagation, and on the potential for debonding of the solidified healing agent from the concrete matrix: the higher their values, the higher the load-carrying capacity. The solidified healing agent debonds from the concrete matrix when the interfacial cohesive properties are less than 25% of the fracture properties of the solidified healing agent.

(This article belongs to the Special Issue Application of Finite Element Methods)
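Cohesive surfaces are often described by a bilinear traction–separation law: traction rises linearly with the separation δ up to the interface strength t0 at δ0, then softens linearly to zero at the final separation δf, so the fracture energy is G = t0·δf/2. This is a common choice in cohesive-zone modeling, but the abstract does not state which law the authors used; the Python sketch and its parameter values are illustrative assumptions that only make "interfacial cohesive properties" (strength and fracture energy) concrete.

```python
def bilinear_traction(delta: float, t0: float, delta0: float, delta_f: float) -> float:
    """Traction for monotonic opening under a bilinear cohesive law (no unloading history)."""
    if delta <= 0.0:
        return 0.0
    if delta <= delta0:
        return t0 * delta / delta0                          # linear elastic branch
    if delta < delta_f:
        return t0 * (delta_f - delta) / (delta_f - delta0)  # linear softening branch
    return 0.0                                              # fully debonded

def fracture_energy(t0: float, delta_f: float) -> float:
    """Area under the bilinear traction-separation curve."""
    return 0.5 * t0 * delta_f

# Hypothetical interface: 3 MPa strength, damage onset at 0.001 mm, full debonding at 0.05 mm.
t0, d0, df = 3.0, 0.001, 0.05
```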

►▼

Show Figures

Figure 1

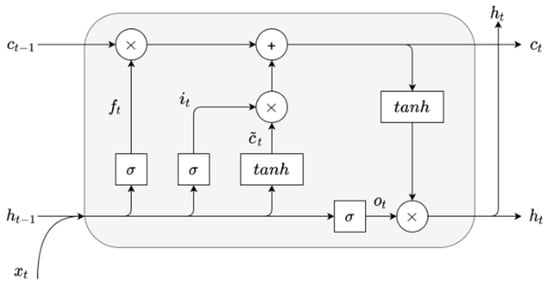

Open Access Article

Incorporating Time-Series Forecasting Techniques to Predict Logistics Companies’ Staffing Needs and Order Volume

Computation 2023, 11(7), 141; https://doi.org/10.3390/computation11070141 - 14 Jul 2023

Abstract

Time-series analysis is a widely used method for studying past data to make future predictions. This paper focuses on utilizing time-series analysis techniques to forecast the resource needs of logistics delivery companies, enabling them to meet their objectives and ensure sustained growth. The study aims to build a model that optimizes the prediction of order volume during specific time periods and determines the staffing requirements for the company. The prediction of order volume in logistics companies involves analyzing trend and seasonality components in the data. Autoregressive (AR), Autoregressive Integrated Moving Average (ARIMA), and Seasonal Autoregressive Integrated Moving Average with Exogenous Variables (SARIMAX) are well-established and effective in capturing these patterns, providing interpretable results. Deep-learning algorithms require more data for training, which may be limited in certain logistics scenarios. In such cases, traditional models like SARIMAX, ARIMA, and AR can still deliver reliable predictions with fewer data points. Deep-learning models like LSTM can capture complex patterns but lack interpretability, which is crucial in the logistics industry. Balancing performance and practicality, our study combined SARIMAX, ARIMA, AR, and Long Short-Term Memory (LSTM) models to provide a comprehensive analysis and insights into predicting order volume in logistics companies. A real dataset from an international shipping company, consisting of the number of orders during specific time periods, was used to generate a comprehensive time-series dataset. Additionally, new features such as holidays, off days, and sales seasons were incorporated into the dataset to assess their impact on order forecasting and workforce demands. 
The paper compares the performance of the four different time-series analysis methods in predicting order trends for three countries: United Arab Emirates (UAE), Kingdom of Saudi Arabia (KSA), and Kuwait (KWT), as well as across all countries. By analyzing the data and applying the SARIMAX, ARIMA, LSTM, and AR models to predict future order volume and trends, it was found that the SARIMAX model outperformed the other methods. The SARIMAX model demonstrated superior accuracy in predicting order volumes and trends in the UAE (MAPE: 0.097, RMSE: 0.134), KSA (MAPE: 0.158, RMSE: 0.199), and KWT (MAPE: 0.137, RMSE: 0.215).


(This article belongs to the Special Issue Computational Social Science and Complex Systems)
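The abstract names AR models, holiday features, and the MAPE/RMSE metrics but gives no model orders or code. As a minimal sketch only (not the authors' pipeline), the block below fits an autoregressive model with an optional exogenous regressor (an ARX model, e.g. a hypothetical 0/1 holiday flag) by ordinary least squares in NumPy, produces recursive forecasts, and scores them with MAPE and RMSE. ARIMA would add differencing and moving-average terms, and SARIMAX seasonal terms on top of that; those are usually taken from a library such as statsmodels rather than hand-rolled. The function names `fit_arx`, `forecast_arx`, `mape`, and `rmse` are illustrative assumptions. MAPE is returned as a fraction, matching the magnitude of the values quoted above; the abstract does not state whether the errors were computed on raw or scaled order counts.

```python
import math

import numpy as np


def fit_arx(y, p, exog=None):
    """Least-squares fit of y_t = c + sum_k phi_k * y_{t-k} + beta . x_t.

    Returns [c, phi_1, ..., phi_p, beta_1, ..., beta_m].
    """
    y = np.asarray(y, dtype=float)
    n = len(y)
    cols = [np.ones(n - p)]                               # intercept
    # Lag (k + 1) column, aligned with the targets y[p:]
    cols += [y[p - k - 1:n - k - 1] for k in range(p)]
    if exog is not None:
        x = np.asarray(exog, dtype=float).reshape(n, -1)  # one row per time step
        cols += [x[p:, j] for j in range(x.shape[1])]
    design = np.column_stack(cols)
    coef, *_ = np.linalg.lstsq(design, y[p:], rcond=None)
    return coef


def forecast_arx(y, coef, p, steps, future_exog=None):
    """Recursive multi-step forecast; future_exog holds one row of
    regressors (e.g. a holiday flag) per forecast step."""
    history = [float(v) for v in np.asarray(y, dtype=float)[-p:]]
    n_exog = len(coef) - 1 - p
    if n_exog:
        x = np.asarray(future_exog, dtype=float).reshape(steps, n_exog)
    preds = []
    for h in range(steps):
        lags = history[::-1][:p]                          # y_{t-1}, ..., y_{t-p}
        value = coef[0] + float(np.dot(coef[1:p + 1], lags))
        if n_exog:
            value += float(np.dot(coef[p + 1:], x[h]))
        preds.append(value)
        history.append(value)                             # feed prediction back in
    return preds


def mape(actual, predicted):
    """Mean absolute percentage error as a fraction (multiply by 100 for %).
    Undefined when any actual value is zero."""
    if len(actual) != len(predicted) or len(actual) == 0:
        raise ValueError("inputs must be non-empty and of equal length")
    if any(a == 0 for a in actual):
        raise ValueError("MAPE is undefined when an actual value is zero")
    return sum(abs(a - f) / abs(a) for a, f in zip(actual, predicted)) / len(actual)


def rmse(actual, predicted):
    """Root mean squared error, in the units of the series."""
    if len(actual) != len(predicted) or len(actual) == 0:
        raise ValueError("inputs must be non-empty and of equal length")
    return math.sqrt(sum((a - f) ** 2 for a, f in zip(actual, predicted)) / len(actual))
```

For instance, `fit_arx(orders, 7, exog=holiday_flags)` would regress daily orders on the previous seven days plus a holiday indicator; `orders` and `holiday_flags` are hypothetical arrays. Least squares keeps the sketch dependency-light and the coefficients directly interpretable, which mirrors the interpretability argument made above.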


Open Access Article

Algebraic Structures Induced by the Insertion and Detection of Malware

Computation 2023, 11(7), 140; https://doi.org/10.3390/computation11070140 - 11 Jul 2023

Abstract


Since the introduction of malware, research on it has pursued two main goals. On the one hand, malware writers have focused on developing software that can cause as much damage as possible to a targeted host for as long as possible. On the other hand, one of the main purposes of malware analysts is to develop tools, such as malware detection systems (MDS) or network intrusion detection systems (NIDS), that prevent and detect possible threats to information systems. Obfuscation techniques, such as encrypting the virus’s code lines, have been developed to evade detection; in response, shallow machine learning and deep learning algorithms have recently been introduced to detect such malware. This paper is devoted to some theoretical implications of these investigations. We prove that hidden algebraic structures, such as equipped posets and their categories of representations, underlie the research on some infections. Properties of these categories are given to provide a better understanding of different infection techniques.


Open Access Article

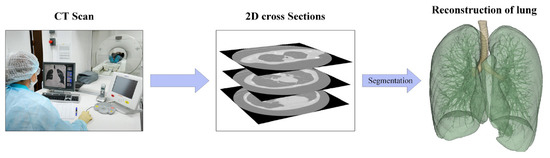

Incremental Learning-Based Algorithm for Anomaly Detection Using Computed Tomography Data

Computation 2023, 11(7), 139; https://doi.org/10.3390/computation11070139 - 10 Jul 2023

Abstract


In a nuclear power plant (NPP), tools are visually inspected to ensure their integrity before and after their use in the nuclear reactor. The manual inspection is usually performed by qualified technicians and takes a large amount of time (weeks to months). In this work, we propose an automated tool inspection that uses a classification model for anomaly detection. The deep learning model classifies computed tomography (CT) images as defective (with missing components) or defect-free. Moreover, the proposed algorithm enables incremental learning (IL), using a proposed thresholding technique to ensure high prediction confidence through continuous online training of the deployed anomaly detection model. The proposed algorithm is tested against existing state-of-the-art IL methods, showing that it helps the model quickly learn the anomaly patterns. In addition, it enhances the confidence of the classification model while preserving a desired minimal performance.
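The abstract does not specify the thresholding rule, so the following is only a hypothetical sketch of generic confidence-threshold gating, one common way to combine a deployed classifier with incremental updates; it is not the authors' technique. Each prediction's top softmax probability is compared against a threshold: confident predictions are accepted automatically, while uncertain ones are queued (for example, for technician review and inclusion in the next incremental training round). The threshold of 0.9, the class indices (0 = defect-free, 1 = defective), and the function names are assumptions.

```python
import math
from typing import List, Sequence, Tuple


def softmax(logits: Sequence[float]) -> List[float]:
    """Numerically stable softmax over one image's class logits."""
    top = max(logits)
    exps = [math.exp(z - top) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def route_by_confidence(
    batch: Sequence[Sequence[float]], threshold: float = 0.9
) -> Tuple[List[Tuple[int, int, float]], List[int]]:
    """Split a batch of logits into accepted predictions and a review queue.

    Returns (accepted, queued): accepted holds (index, label, confidence)
    tuples whose top probability reaches the threshold; queued holds the
    indices of uncertain samples, candidates for labeling and retraining.
    """
    accepted, queued = [], []
    for i, logits in enumerate(batch):
        probs = softmax(logits)
        label = max(range(len(probs)), key=probs.__getitem__)
        if probs[label] >= threshold:
            accepted.append((i, label, probs[label]))
        else:
            queued.append(i)
    return accepted, queued
```

Raising the threshold trades automation for safety: fewer scans are accepted unreviewed, and more labeled examples flow back into incremental training.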


Open Access Article

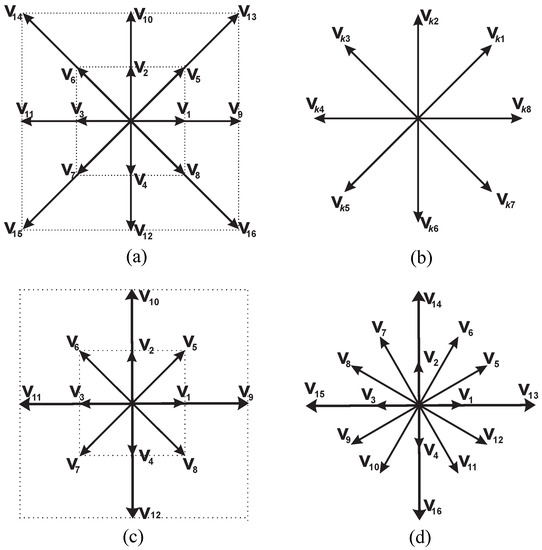

Analysis of Discrete Velocity Models for Lattice Boltzmann Simulations of Compressible Flows at Arbitrary Specific Heat Ratio

Computation 2023, 11(7), 138; https://doi.org/10.3390/computation11070138 - 10 Jul 2023

Abstract


This paper compares the discrete velocity models used in the lattice Boltzmann method to simulate compressible flows with arbitrary specific heat ratios. The stability of the governing equations is analyzed for the steady flow regime. A technique for constructing stability domains in parameter space, based on eigenvalue analysis, is proposed, and the stability domains of the different models are compared. It is demonstrated that the maximum macrovelocity beyond which instability sets in depends on the relaxation time, and plots of this dependence are constructed. For double-distribution-function models, it is demonstrated that the value of the Prandtl number does not significantly affect stability. An off-lattice parametric finite-difference scheme is proposed for the practical realization of the considered kinetic models. Riemann problems and the simulation of the Kelvin–Helmholtz instability are solved numerically. It is demonstrated that the different models yield similar numerical results. The proposed technique of stability investigation can serve as an effective tool for the theoretical comparison of different kinetic models used in applications of the lattice Boltzmann method.


(This article belongs to the Special Issue Computational Techniques for Fluid Dynamics Problems)
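The eigenvalue-based construction of a stability domain can be illustrated on a much simpler model than the compressible-flow models studied in the paper. The sketch below is a stand-in chosen for illustration: a D1Q2 lattice BGK scheme for linear advection-diffusion (lattice units, velocities ±1, equilibrium ρ(1 ± u)/2). For each parameter pair (u, τ) it forms the von Neumann amplification matrix (streaming phase times linearized collision), takes the largest eigenvalue modulus over a grid of wavenumbers θ, and marks the point stable when that spectral radius does not exceed 1. The model, the θ grid, the tolerance, and all function names are assumptions, not the authors' method.

```python
import numpy as np


def amplification_matrix(u, tau, theta):
    """Von Neumann amplification matrix of a toy D1Q2 BGK scheme
    for linear advection-diffusion (lattice units, c = 1)."""
    omega = 1.0 / tau
    # Linear equilibrium f_eq = E @ f, with rho = f_plus + f_minus and
    # f_eq_plus / f_eq_minus = rho * (1 + u) / 2 and rho * (1 - u) / 2.
    E = np.array([[(1 + u) / 2, (1 + u) / 2],
                  [(1 - u) / 2, (1 - u) / 2]])
    collide = np.eye(2) - omega * (np.eye(2) - E)
    # Streaming for a Fourier mode exp(i*theta*x): f_plus arrives from x - 1,
    # f_minus from x + 1.
    stream = np.diag([np.exp(-1j * theta), np.exp(1j * theta)])
    return stream @ collide


def spectral_radius(u, tau, n_theta=181):
    """Largest eigenvalue modulus over wavenumbers theta in [-pi, pi]."""
    thetas = np.linspace(-np.pi, np.pi, n_theta)
    return max(np.abs(np.linalg.eigvals(amplification_matrix(u, tau, t))).max()
               for t in thetas)


def stability_domain(us, taus, tol=1e-9):
    """Boolean grid (rows: tau, columns: u), True where |lambda| <= 1 + tol."""
    return np.array([[spectral_radius(u, tau) <= 1.0 + tol for u in us]
                     for tau in taus])
```

For τ = 1 the collision step reduces to the equilibrium projection, and the only nonzero eigenvalue is cos θ − i u sin θ, so the stable band is exactly |u| ≤ 1. A grid scan such as `stability_domain(np.linspace(0.0, 1.5, 16), [0.6, 1.0, 2.0])` reproduces this row and shows how the admissible velocity range varies with τ, which is the kind of dependence the abstract describes plotting.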


Open Access Article

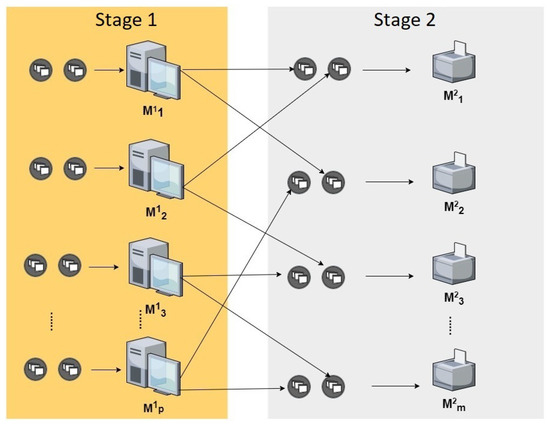

Makespan Minimization for the Two-Stage Hybrid Flow Shop Problem with Dedicated Machines: A Comprehensive Study of Exact and Heuristic Approaches

Computation 2023, 11(7), 137; https://doi.org/10.3390/computation11070137 - 10 Jul 2023

Abstract


This paper presents a comprehensive approach to minimizing makespan in the challenging two-stage hybrid flowshop with dedicated machines, a problem known to be strongly NP-hard. The study proposes a constraint programming approach, a novel heuristic based on a priority rule, and Tabu search procedures to tackle this optimization problem. The constraint programming model, implemented using a commercial solver, serves as the exact resolution method, while the heuristic and Tabu search simultaneously explore approximate solutions. The motivation for this research is the need to address the complexity of scheduling in the two-stage hybrid flowshop with dedicated machines, which poses significant challenges because of its NP-hard nature and the need for efficient optimization techniques. The contribution of this study lies in an integrated approach that combines constraint programming, a novel heuristic, and Tabu search: the constraint programming model offers exact resolution, while the heuristic and Tabu search provide approximate solutions, balancing accuracy and efficiency. To enhance the search process, the research introduces effective elimination rules that reduce the search space and the search effort, improving overall optimization performance and helping to find high-quality solutions. The results demonstrate the effectiveness of the proposed approach. The heuristic solves all instances of specific classes, showcasing its practical applicability. Furthermore, the constraint programming model exhibits exceptional efficiency, successfully solving problems with up to

(This article belongs to the Section Computational Engineering)
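To make the objective concrete, here is a minimal sketch assuming the usual formulation of this problem: a single shared machine at stage 1, and at stage 2 a set of dedicated machines, each job being processed on the one machine its type prescribes. The block evaluates the makespan of a job permutation, applies a simple priority rule, and computes an elementary lower bound that can certify when a sequence is optimal. The rule shown (longest stage-2 time first) is a hypothetical illustration, not the paper's priority rule, and the constraint programming model and Tabu search are not reproduced. The `Job` tuple layout and all function names are assumptions.

```python
from typing import List, Sequence, Tuple

# (stage-1 processing time, stage-2 processing time, dedicated stage-2 machine)
Job = Tuple[float, float, int]


def makespan(jobs: Sequence[Job], order: Sequence[int]) -> float:
    """Makespan of a permutation schedule: jobs pass through the shared
    stage-1 machine in `order`, then each starts on its dedicated stage-2
    machine as soon as both it and that machine are available."""
    t1 = 0.0            # completion time on the stage-1 machine
    free = {}           # stage-2 machine -> time it becomes free
    cmax = 0.0
    for j in order:
        p1, p2, m = jobs[j]
        t1 += p1
        start2 = max(t1, free.get(m, 0.0))
        free[m] = start2 + p2
        cmax = max(cmax, free[m])
    return cmax


def longest_stage2_first(jobs: Sequence[Job]) -> List[int]:
    """Hypothetical priority rule: non-increasing stage-2 processing time."""
    return sorted(range(len(jobs)), key=lambda j: -jobs[j][1])


def lower_bound(jobs: Sequence[Job]) -> float:
    """Stage 1 must process every job before the last stage-2 operation;
    each stage-2 machine cannot start before its earliest job leaves stage 1."""
    lb = sum(p1 for p1, _, _ in jobs) + min(p2 for _, p2, _ in jobs)
    for m in {mm for _, _, mm in jobs}:
        mine = [(p1, p2) for p1, p2, mm in jobs if mm == m]
        lb = max(lb, min(p1 for p1, _ in mine) + sum(p2 for _, p2 in mine))
    return lb
```

With `jobs = [(2, 3, 0), (1, 4, 1), (3, 1, 0)]`, the rule returns the order `[1, 0, 2]` with makespan 7, which equals the lower bound, so that sequence is optimal for this small instance; a poor order such as `[2, 0, 1]` gives 10. Exact methods and metaheuristics are needed precisely because such bounds are not tight in general.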


Open Access Review

Mathematical and Computer Modeling as a Novel Approach for the Accelerated Development of New Inhalation and Intranasal Drug Delivery Systems

Computation 2023, 11(7), 136; https://doi.org/10.3390/computation11070136 - 09 Jul 2023

Abstract


This paper presents modern methods of mathematical modeling that are widely used in the development of new inhalation and intranasal drugs, including those needed to treat socially significant diseases such as tuberculosis, bronchial asthma, and mental and behavioral disorders. The reviewed studies reveal that the mathematical modeling methods used in drug development are fragmented and that no single approach combines the existing methods. The results presented in this work should contribute to the development of a unified multiscale model as a new approach in mathematical modeling, accelerating the development and market introduction of new drugs with high bioavailability and the required therapeutic efficacy.



News

31 July 2023

MDPI’s 2022 Best PhD Thesis Awards in Computer Science and Mathematics—Winners Announced


31 July 2023

MDPI’s 2022 Young Investigator Awards in Computer Science and Mathematics—Winners Announced


Topics

Topic in

Axioms, Computation, Dynamics, Mathematics, Symmetry

Structural Stability and Dynamics: Theory and Applications

Topic Editors: Harekrushna Behera, Chia-Cheng Tsai, Jen-Yi Chang

Deadline: 30 September 2023

Topic in

Entropy, Algorithms, Computation, MAKE, Energies, Materials

Artificial Intelligence and Computational Methods: Modeling, Simulations and Optimization of Complex Systems

Topic Editors: Jaroslaw Krzywanski, Yunfei Gao, Marcin Sosnowski, Karolina Grabowska, Dorian Skrobek, Ghulam Moeen Uddin, Anna Kulakowska, Anna Zylka, Bachil El Fil

Deadline: 20 October 2023

Topic in

Applied Sciences, BioMedInformatics, BioTech, Genes, Computation

Computational Intelligence and Bioinformatics (CIB)

Topic Editors: Marco Mesiti, Giorgio Valentini, Elena Casiraghi, Tiffany J. Callahan

Deadline: 31 October 2023

Topic in

Entropy, Fractal Fract, Dynamics, Mathematics, Computation, Axioms

Advances in Nonlinear Dynamics: Methods and Applications

Topic Editors: Ravi P. Agarwal, Maria Alessandra Ragusa

Deadline: 20 November 2023


Special Issues

Special Issue in

Computation

Applications of Statistics and Machine Learning in Electronics

Guest Editors: Stefan Hensel, Marin B. Marinov, Malinka Ivanova, Maya Dimitrova, Hiroaki Wagatsuma

Deadline: 31 August 2023

Special Issue in

Computation

Solstice 2023—International Conference on Discrete Models of Complex Systems

Guest Editors: Franco Bagnoli, Anna T. Lawniczak

Deadline: 15 September 2023

Special Issue in

Computation

Applications of Evolutionary Computation: Past Success and Future Challenges

Guest Editor: Alexandros Tzanetos

Deadline: 30 September 2023

Special Issue in

Computation

Mathematical Modeling and Study of Nonlinear Dynamic Processes

Guest Editor: Alexander Pchelintsev

Deadline: 31 October 2023