Boosting the Learning for Ranking Patterns

Machine-Learning-Based Model for Hurricane Storm Surge Forecasting in the Lower Laguna Madre

Detection of Plausibility and Error Reasons in Finite Element Simulations with Deep Learning Networks

Unsupervised Cyclic Siamese Networks Automating Cell Imagery Analysis

Journal Description

Algorithms is a peer-reviewed, open access journal that provides an advanced forum for studies related to algorithms and their applications. Algorithms is published monthly online by MDPI. The European Society for Fuzzy Logic and Technology (EUSFLAT) is affiliated with Algorithms, and its members receive discounts on the article processing charges.

- Open Access — free for readers, with article processing charges (APC) paid by authors or their institutions.

- High Visibility: indexed within Scopus, ESCI (Web of Science), Ei Compendex, MathSciNet and other databases.

- Journal Rank: CiteScore - Q2 (Numerical Analysis)

- Rapid Publication: manuscripts are peer-reviewed and a first decision is provided to authors approximately 19.1 days after submission; acceptance to publication is undertaken in 3.4 days (median values for papers published in this journal in the first half of 2023).

- Testimonials: See what our editors and authors say about Algorithms.

- Recognition of Reviewers: reviewers who provide timely, thorough peer-review reports receive vouchers entitling them to a discount on the APC of their next publication in any MDPI journal, in appreciation of the work done.

Impact Factor: 2.3 (2022); 5-Year Impact Factor: 2.2 (2022)

Latest Articles

Learning to Extrapolate Using Continued Fractions: Predicting the Critical Temperature of Superconductor Materials

Algorithms 2023, 16(8), 382; https://doi.org/10.3390/a16080382 - 08 Aug 2023

Abstract

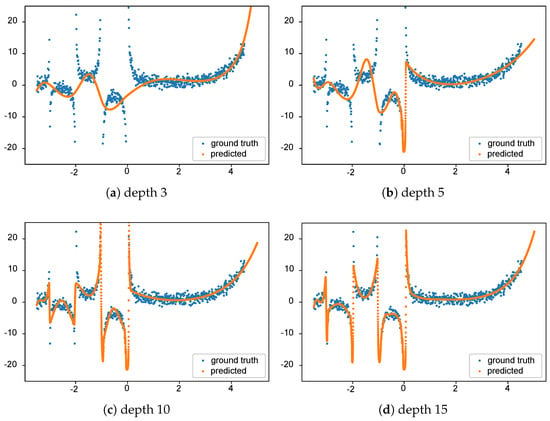

In the field of Artificial Intelligence (AI) and Machine Learning (ML), a common objective is the approximation of unknown target functions

(This article belongs to the Special Issue Machine Learning Algorithms and Methods for Predictive Analytics)

Open Access Review

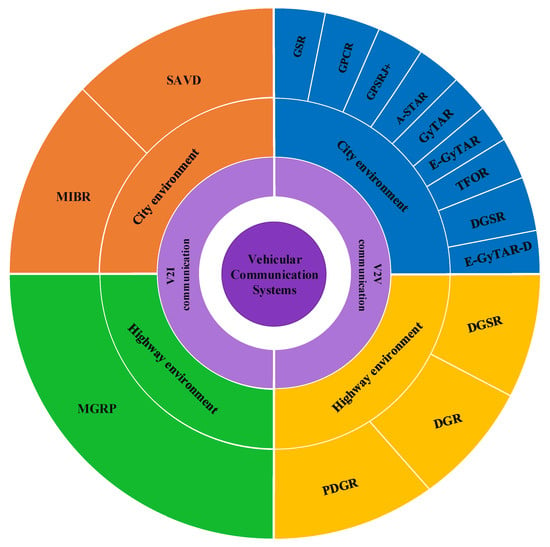

Investigating Routing in the VANET Network: Review and Classification of Approaches

Algorithms 2023, 16(8), 381; https://doi.org/10.3390/a16080381 - 07 Aug 2023

Abstract

Vehicular Ad Hoc Networks (VANETs) need methods to manage the problems caused by high traffic volumes during the day and night: interactions between vehicles and pedestrians, vehicle collisions, increasing travel delays, and energy issues. Routing is one of the most critical problems in VANETs. Reinforcement learning (RL) is a category of machine learning whose algorithms can be used to find a better path. Based on the feedback they receive from the environment, these methods learn from previous actions and reactions and adjust the system accordingly. This paper provides a comprehensive review of methods such as reinforcement learning, deep reinforcement learning, and fuzzy learning in traffic networks, with the aim of identifying the best method for finding optimal routes in VANETs. It discusses the advantages, disadvantages, and performance of the methods introduced. Finally, we categorize the investigated methods and suggest the proper performance of each of them.

(This article belongs to the Collection Featured Reviews of Algorithms)

Open Access Article

Design Optimization of Truss Structures Using a Graph Neural Network-Based Surrogate Model

Algorithms 2023, 16(8), 380; https://doi.org/10.3390/a16080380 - 07 Aug 2023

Abstract

One of the primary objectives of truss structure design optimization is to minimize the total weight by determining the optimal sizes of the truss members while ensuring structural stability and integrity against external loads. Trusses consist of pin joints connected by straight members, analogous to vertices and edges in a mathematical graph. This characteristic motivates the idea of representing truss joints and members as graph vertices and edges. In this study, a Graph Neural Network (GNN) is employed to exploit the benefits of graph representation and develop a GNN-based surrogate model integrated with a Particle Swarm Optimization (PSO) algorithm to approximate nodal displacements of trusses during the design optimization process. This approach enables the determination of the optimal cross-sectional areas of the truss members with fewer finite element model (FEM) analyses. The validity and effectiveness of the GNN-based optimization technique are assessed by comparing its results with those of a conventional FEM-based design optimization of three truss structures: a 10-bar planar truss, a 72-bar space truss, and a 200-bar planar truss. The results demonstrate the superiority of the GNN-based optimization, which can achieve the optimal solutions without violating constraints and at a faster rate, particularly for complex truss structures like the 200-bar planar truss problem.

(This article belongs to the Special Issue Deep Neural Networks and Optimization Algorithms)

Open Access Article

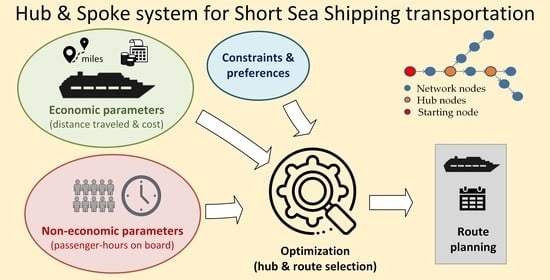

A Multi-Objective Tri-Level Algorithm for Hub-and-Spoke Network in Short Sea Shipping Transportation

Algorithms 2023, 16(8), 379; https://doi.org/10.3390/a16080379 - 07 Aug 2023

Abstract

Hub-and-Spoke (H&S) network modeling is a form of transport topology optimization in which network joins are connected through intermediate hub nodes. The Short Sea Shipping (SSS) problem aims to efficiently disperse passenger flows involving multiple vessel routes and intermediary hubs through which passengers are transferred to their final destination. The problem contains elements of the Hub-and-Spoke and Travelling Salesman, with different levels of passenger flows among islands, making it more demanding than the typical H&S one, as the hub selection within nodes and the shortest routes among islands are internal optimization goals. This work introduces a multi-objective tri-level optimization algorithm for the General Network of Short Sea Shipping (GNSSS) problem to reduce travel distances and transportation costs while improving travel quality and user satisfaction, mainly by minimizing passenger hours spent on board. The analysis is performed at three levels of decisions: (a) the hub node assignment, (b) the island-to-line assignment, and (c) the island service sequence within each line. Due to the magnitude and complexity of the problem, a genetic algorithm is employed for the implementation. The algorithm performance has been tested and evaluated through several real and simulated case studies of different sizes and operational scenarios. The results indicate that the algorithm provides rational solutions in accordance with the desired sub-objectives. The multi-objective consideration leads to solutions that are quite scattered in the solution space, indicating the necessity of employing formal optimization methods. Typical Pareto diagrams present non-dominated solutions varying at a range of 30 percent in terms of the total distance traveled and more than 50 percent in relation to the cumulative passenger hours. 
Evaluation results further indicate satisfactory algorithm performance in terms of result stability (repeatability) and computational time requirements. In conclusion, the work provides a tool for assisting network operation and transport planning decisions by shipping companies in the directions of cost reduction and traveler service upgrade. In addition, the model can be adapted to other applications in transportation and in the supply chain.

(This article belongs to the Special Issue Optimization Algorithms for Decision Support Systems)

Open Access Review

An Overview of Privacy Dimensions on the Industrial Internet of Things (IIoT)

Algorithms 2023, 16(8), 378; https://doi.org/10.3390/a16080378 - 06 Aug 2023

Abstract

The rapid advancements in technology have given rise to groundbreaking solutions and practical applications in the field of the Industrial Internet of Things (IIoT). These advancements have had a profound impact on the structures of numerous industrial organizations. The IIoT, a seamless integration of the physical and digital realms with minimal human intervention, has ushered in radical changes in the economy and modern business practices. At the heart of the IIoT lies its ability to gather and analyze vast volumes of data, which is then harnessed by artificial intelligence systems to perform intelligent tasks such as optimizing networked units’ performance, identifying and correcting errors, and implementing proactive maintenance measures. However, implementing IIoT systems is fraught with difficulties, notably in terms of security and privacy. IIoT implementations are susceptible to sophisticated security attacks at various levels of networking and communication architecture. The complex and often heterogeneous nature of these systems makes it difficult to ensure availability, confidentiality, and integrity, raising concerns about mistrust in network operations, privacy breaches, and potential loss of critical, personal, and sensitive information of the network's end-users. To address these issues, this study aims to investigate the privacy requirements of an IIoT ecosystem as outlined by industry standards. It provides a comprehensive overview of the IIoT, its advantages, disadvantages, challenges, and the imperative need for industrial privacy. The research methodology encompasses a thorough literature review to gather existing knowledge and insights on the subject. Additionally, it explores how the IIoT is transforming the manufacturing industry and enhancing industrial processes, incorporating case studies and real-world examples to illustrate its practical applications and impact. 
Also, the research endeavors to offer actionable recommendations on implementing privacy-enhancing measures and establishing a secure IIoT ecosystem.

(This article belongs to the Special Issue Computational Intelligence in Wireless Sensor Networks and IoT)

Open Access Article

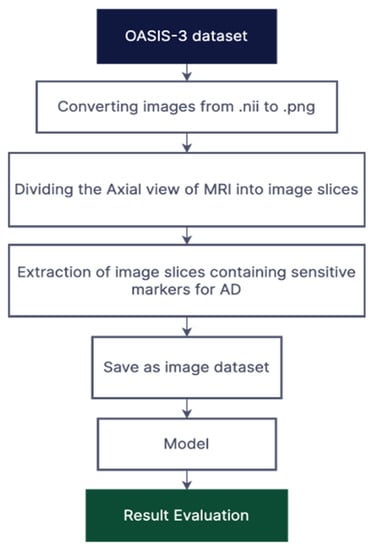

Ensemble Transfer Learning for Distinguishing Cognitively Normal and Mild Cognitive Impairment Patients Using MRI

Algorithms 2023, 16(8), 377; https://doi.org/10.3390/a16080377 - 06 Aug 2023

Abstract

Alzheimer’s disease is a chronic neurodegenerative disease that causes brain cells to degenerate, resulting in decreased physical and mental abilities and, in severe cases, permanent memory loss. It is considered the most common and fatal form of dementia. Although mild cognitive impairment (MCI) precedes Alzheimer’s disease (AD), it does not necessarily show the obvious symptoms of AD. As a result, it is challenging to distinguish patients with mild cognitive impairment from cognitively normal individuals. In this paper, we propose an ensemble of deep learners based on convolutional neural networks for the early diagnosis of Alzheimer’s disease. The proposed approach utilises simple averaging ensemble and weighted averaging ensemble methods. The ensemble-based transfer learning model demonstrates enhanced generalization and performance for AD diagnosis compared to traditional transfer learning methods. Extensive experiments on the OASIS-3 dataset validate the effectiveness of the proposed model, showcasing its superiority over state-of-the-art transfer learning approaches in terms of accuracy, robustness, and efficiency.

(This article belongs to the Collection Feature Papers in Algorithms and Mathematical Models for Computer-Assisted Diagnostic Systems)

Open Access Article

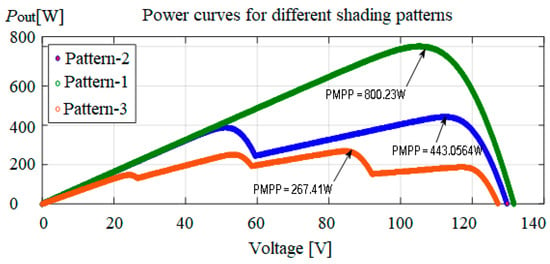

Comparison of Meta-Heuristic Optimization Algorithms for Global Maximum Power Point Tracking of Partially Shaded Solar Photovoltaic Systems

Algorithms 2023, 16(8), 376; https://doi.org/10.3390/a16080376 - 05 Aug 2023

Abstract

Partial shading conditions lead to power mismatches among photovoltaic (PV) panels, resulting in the generation of multiple peak power points on the P-V curve. At this point, conventional MPPT algorithms fail to operate effectively. This research work mainly focuses on the exploration of performance optimization and harnessing more power during the partial shading environment of solar PV systems with a single-objective non-linear optimization problem subjected to different operations formulated and solved using recent metaheuristic algorithms such as Cat Swarm Optimization (CSO), Grey Wolf Optimization (GWO) and the proposed Chimp Optimization algorithm (ChOA). This research work is implemented on a test system with the help of MATLAB/SIMULINK, and the obtained results are discussed. From the overall results, the metaheuristic methods used by the trackers based on their analysis showed convergence towards the global Maximum Power Point (MPP). Additionally, the proposed ChOA technique shows improved performance over other existing algorithms.

(This article belongs to the Special Issue Metaheuristic Algorithms in Optimal Design of Engineering Problems)

Open Access Article

Ascertaining the Ideality of Photometric Stereo Datasets under Unknown Lighting

Algorithms 2023, 16(8), 375; https://doi.org/10.3390/a16080375 - 05 Aug 2023

Abstract

The standard photometric stereo model makes several assumptions that are rarely verified in experimental datasets. In particular, the observed object should behave as a Lambertian reflector, and the light sources should be positioned at an infinite distance from it, along a known direction. Even when Lambert’s law is approximately fulfilled, an accurate assessment of the relative position between the light source and the target is often unavailable in real situations. The Hayakawa procedure is a computational method for estimating such information directly from data images. It occasionally breaks down when some of the available images excessively deviate from ideality. This is generally due to observing a non-Lambertian surface, or illuminating it from a close distance, or both. Indeed, in narrow shooting scenarios, typical, e.g., of archaeological excavation sites, it is impossible to position a flashlight at a sufficient distance from the observed surface. It is then necessary to understand if a given dataset is reliable and which images should be selected to better reconstruct the target. In this paper, we propose some algorithms to perform this task and explore their effectiveness.

(This article belongs to the Special Issue Recent Advances in Algorithms for Computer Vision Applications)

Open Access Article

A Greedy Pursuit Hierarchical Iteration Algorithm for Multi-Input Systems with Colored Noise and Unknown Time-Delays

Algorithms 2023, 16(8), 374; https://doi.org/10.3390/a16080374 - 04 Aug 2023

Abstract

This paper focuses on the joint estimation of parameters and time delays for multi-input systems that contain unknown input delays and colored noise. A greedy pursuit hierarchical iteration algorithm is proposed, which can reduce the estimation cost. Firstly, an over-parameterized approach is employed to construct a sparse system model of multi-input systems even in the absence of prior knowledge of time delays. Secondly, the hierarchical principle is applied to replace the unknown true noise items with their estimation values, and a greedy pursuit search based on compressed sensing is employed to find key parameters using limited sampled data. The greedy pursuit search can effectively reduce the scale of the system model and improve the identification efficiency. Then, the parameters and time delays can be estimated simultaneously while considering the known orders and found locations of key parameters by utilizing iterative methods with limited sampled data. Finally, some simulations are provided to illustrate the effectiveness of the presented algorithm in this paper.

(This article belongs to the Section Algorithms and Mathematical Models for Computer-Assisted Diagnostic Systems)

Open Access Article

Data-Driven Deployment of Cargo Drones: A U.S. Case Study Identifying Key Markets and Routes

Algorithms 2023, 16(8), 373; https://doi.org/10.3390/a16080373 - 03 Aug 2023

Abstract

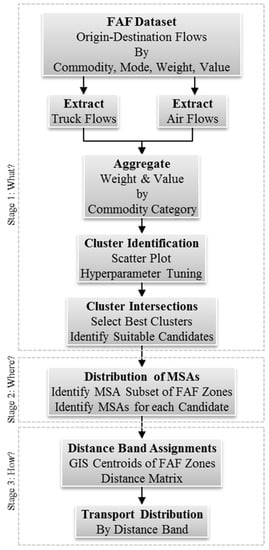

Electric and autonomous aircraft (EAA) are set to disrupt current cargo-shipping models. To maximize the benefits of this technology, investors and logistics managers need information on target commodities, service location establishment, and the distribution of origin–destination pairs within EAA’s range limitations. This research introduces a three-phase data-mining and geographic information system (GIS) algorithm to support data-driven decision-making under uncertainty. Analysts can modify and expand this workflow to scrutinize origin–destination commodity flow datasets representing various locations. The algorithm identifies four commodity categories contributing to more than one-third of the value transported by aircraft across the contiguous United States, yet only 5% of the weight. The workflow highlights 8 out of 129 regional locations that moved more than 20% of the weight of those four commodity categories. A distance band of 400 miles among these eight locations accounts for more than 80% of the transported weight. This study addresses a literature gap, identifying opportunities for supply chain redesign using EAA. The presented methodology can guide planners and investors in identifying prime target markets for emerging EAA technologies using regional datasets.

(This article belongs to the Special Issue Algorithms and Optimization for Project Management and Supply Chain Management)

Open Access Article

Applying Particle Swarm Optimization Variations to Solve the Transportation Problem Effectively

Algorithms 2023, 16(8), 372; https://doi.org/10.3390/a16080372 - 03 Aug 2023

Abstract

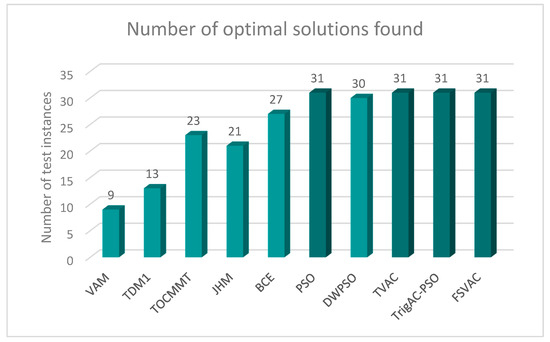

The Transportation Problem (TP) is a special type of linear programming problem, where the objective is to minimize the cost of distributing a product from a number of sources to a number of destinations. Many methods for solving the TP have been studied over time. However, exact methods do not always succeed in finding the optimal solution or a solution that effectively approximates the optimal one. This paper introduces two new variations of the well-established Particle Swarm Optimization (PSO) algorithm, named the Trigonometric Acceleration Coefficients-PSO (TrigAc-PSO) and the Four Sectors Varying Acceleration Coefficients PSO (FSVAC-PSO), and applies them to solve the TP. The performance of the proposed variations is examined and validated through extensive experimental tests, in which thirty-two problems of different sizes were solved. Moreover, the proposed PSO variations were compared with exact methods such as Vogel’s Approximation Method (VAM), the Total Differences Method 1 (TDM1), the Total Opportunity Cost Matrix-Minimal Total (TOCM-MT), the Juman and Hoque Method (JHM) and the Bilqis Chastine Erma method (BCE). The proposed variations were also compared with other PSO variations that are well known for their completeness and efficiency, such as Decreasing Weight Particle Swarm Optimization (DWPSO) and Time Varying Acceleration Coefficients (TVAC). Experimental results show that the proposed variations achieve very satisfactory results in terms of efficiency and effectiveness compared to existing exact and heuristic methods.

(This article belongs to the Special Issue Metaheuristic Algorithms in Optimal Design of Engineering Problems)

Open Access Article

Identification of Mechanical Parameters in Flexible Drive Systems Using Hybrid Particle Swarm Optimization Based on the Quasi-Newton Method

Algorithms 2023, 16(8), 371; https://doi.org/10.3390/a16080371 - 31 Jul 2023

Abstract

This study presents hybrid particle swarm optimization with quasi-Newton (HPSO-QN), a hybrid optimization method for accurately identifying mechanical parameters in two-mass model (2MM) systems. These systems are commonly used to model and control high-performance electric drive systems with elastic joints, which are prevalent in modern industrial production. The proposed method combines the global exploration capabilities of particle swarm optimization (PSO) with the local exploitation abilities of the quasi-Newton (QN) method to precisely estimate the motor and load inertias, shaft stiffness, and friction coefficients of the 2MM system. By integrating these two optimization techniques, the HPSO-QN method exhibits superior accuracy and performance compared to standard PSO algorithms. Experimental validation using a 2MM system demonstrates the effectiveness of the proposed method in accurately identifying and improving the mechanical parameters of these complex systems. The HPSO-QN method offers significant implications for enhancing the modeling, performance, and stability of 2MM systems and can be extended to other systems with flexible shafts and couplings. This study contributes to the development of accurate and effective parameter identification methods for complex systems, emphasizing the crucial role of precise parameter estimation in achieving optimal control performance and stability.

(This article belongs to the Special Issue Nature-Inspired Algorithms for Optimization)

Open Access Article

Hardware Suitability of Complex Natural Resonances Extraction Algorithms in Backscattered Radar Signals

Algorithms 2023, 16(8), 370; https://doi.org/10.3390/a16080370 - 31 Jul 2023

Abstract

Complex natural resonances (CNRs) extraction methods such as the matrix pencil method (MPM), the Cauchy method, the vector-fitting Cauchy method (VCM), or Prony’s method decompose a signal in terms of frequency components and damping factors based on Baum’s singularity expansion method (SEM), either in the time or frequency domain. These CNRs are validated by reconstructing the signal from the complex poles and residues and comparing it with the input signal. Here, we compute quantitative performance metrics to evaluate each method’s hardware suitability factor before selecting a hardware platform, using benchmark signals, simulations of backscattering scenarios, and experiments.

(This article belongs to the Special Issue Digital Signal Processing Algorithms and Applications)

Open Access Article

Human Action Representation Learning Using an Attention-Driven Residual 3DCNN Network

Algorithms 2023, 16(8), 369; https://doi.org/10.3390/a16080369 - 31 Jul 2023

Abstract

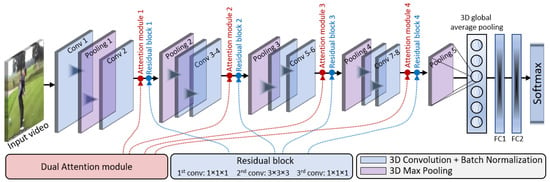

The recognition of human activities using vision-based techniques has become a crucial research field in video analytics. Over the last decade, there have been numerous advancements in deep learning algorithms aimed at accurately detecting complex human actions in video streams. While these algorithms have demonstrated impressive performance in activity recognition, they often exhibit a bias towards either model performance or computational efficiency. This biased trade-off between robustness and efficiency poses challenges when addressing complex human activity recognition problems. To address this issue, this paper presents a computationally efficient yet robust approach, exploiting saliency-aware spatial and temporal features for human action recognition in videos. To achieve effective representation of human actions, we propose an efficient approach called the dual-attentional Residual 3D Convolutional Neural Network (DA-R3DCNN). Our proposed method utilizes a unified channel-spatial attention mechanism, allowing it to efficiently extract significant human-centric features from video frames. By combining dual channel-spatial attention layers with residual 3D convolution layers, the network becomes more discerning in capturing spatial receptive fields containing objects within the feature maps. To assess the effectiveness and robustness of our proposed method, we have conducted extensive experiments on four well-established benchmark datasets for human action recognition. The quantitative results obtained validate the efficiency of our method, showcasing significant improvements in accuracy of up to 11% as compared to state-of-the-art human action recognition methods. Additionally, our evaluation of inference time reveals that the proposed method achieves up to a 74× improvement in frames per second (FPS) compared to existing approaches, thus showing the suitability and effectiveness of the proposed DA-R3DCNN for real-time human activity recognition.

(This article belongs to the Special Issue Algorithms for Image Processing and Machine Vision)

Open Access Editorial

Special Issue “Algorithms for Feature Selection”

Algorithms 2023, 16(8), 368; https://doi.org/10.3390/a16080368 - 31 Jul 2023

Abstract

This Special Issue of the open access journal Algorithms is dedicated to showcasing cutting-edge research in algorithms for feature selection [...]

(This article belongs to the Special Issue Algorithms for Feature Selection)

Open Access Article

Design and Development of Energy Efficient Algorithm for Smart Beekeeping Device to Device Communication Based on Data Aggregation Techniques

Algorithms 2023, 16(8), 367; https://doi.org/10.3390/a16080367 - 30 Jul 2023

Abstract

Bees, like other insects, indirectly contribute to job creation, food security, and poverty reduction. However, across many parts of the world, bee populations are in decline, affecting crop yields due to reduced pollination and ultimately impacting human nutrition. Technology holds promise for countering the impacts of human activities and climatic change on bees’ survival and honey production. However, because smart beekeeping systems mostly operate in remote areas where grid power is inaccessible and battery power is not feasible, such systems need to be energy efficient. This work explores the integration of device-to-device communication with 5G technology as a solution to overcome the energy and throughput concerns in smart beekeeping technology. Mobile-based device-to-device communication allows devices to communicate directly without the need for immediate infrastructure. This type of communication offers advantages in terms of delay reduction, increased throughput, and reduced energy consumption. The faster data transmission capabilities and low-power modes of 5G networks would significantly enhance the energy efficiency during the system’s idle or standby states. Additionally, the paper analyzes the application of both the discovery and communication services offered by 5G in device-to-device-based smart bee farming. A novel, energy-efficient algorithm for smart beekeeping was developed using data integration and data scheduling, and its performance was compared to existing algorithms. The simulation results demonstrated that the proposed smart beekeeping device-to-device communication with data integration guarantees a good quality of service while enhancing energy efficiency.

(This article belongs to the Section Combinatorial Optimization, Graph, and Network Algorithms)

Open Access Article

Machine-Learning Techniques for Predicting Phishing Attacks in Blockchain Networks: A Comparative Study

Algorithms 2023, 16(8), 366; https://doi.org/10.3390/a16080366 - 29 Jul 2023

Abstract

Security in the blockchain has become a topic of concern because of the recent developments in the field. One of the most common cyberattacks is the so-called phishing attack, wherein the attacker tricks the miner into adding a malicious block to the chain under genuine conditions to avoid detection and potentially destroy the entire blockchain. The current attempts at detection include the consensus protocol; however, it fails when a genuine miner tries to add a new block to the blockchain. Zero-trust policies have started making the rounds in the field as they ensure the complete detection of phishing attempts; however, they are still in the process of deployment, which may take a significant amount of time. A more accurate measure of phishing detection involves machine-learning models that use specific features to automate the entire process of classifying an attempt as either a phishing attempt or a safe attempt. This paper highlights several models that may give safe results and help eradicate blockchain phishing attempts.

(This article belongs to the Special Issue Deep Learning Techniques for Computer Security Problems)

Open Access Article

Evolving Multi-Output Digital Circuits Using Multi-Genome Grammatical Evolution

Algorithms 2023, 16(8), 365; https://doi.org/10.3390/a16080365 - 28 Jul 2023

Abstract

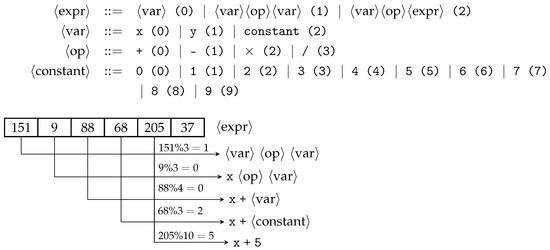

Grammatical Evolution is a Genetic Programming variant which evolves problems in any arbitrary language that is BNF compliant. Since its inception, Grammatical Evolution has been used to solve real-world problems in different domains such as bio-informatics, architecture design, financial modelling, music, software testing,

[...] Read more.

Grammatical Evolution is a Genetic Programming variant which evolves programs in any arbitrary language that is BNF compliant. Since its inception, Grammatical Evolution has been used to solve real-world problems in different domains such as bio-informatics, architecture design, financial modelling, music, software testing, game artificial intelligence and parallel programming. Multi-output problems deal with predicting numerous output variables simultaneously, a notoriously difficult problem. We present a Multi-Genome Grammatical Evolution better suited for tackling multi-output problems, specifically digital circuits. The Multi-Genome consists of multiple genomes, each evolving a solution to a single unique output variable. Each genome is mapped to create its executable object. The mapping mechanism and the genetic, selection, and replacement operators have been adapted to suit the Multi-Genome representation, and a new wrapping operator has been implemented. Additionally, custom grammar syntax rules and a cyclic dependency-checking algorithm are presented to facilitate the evolution of inter-output dependencies which may exist in multi-output problems. Multi-Genome Grammatical Evolution is tested on combinational digital circuit benchmark problems. Results show Multi-Genome Grammatical Evolution performs significantly better than standard Grammatical Evolution on these benchmark problems.

(This article belongs to the Special Issue 2022 and 2023 Selected Papers from Algorithms Editorial Board Members)

Open Access Article

An Algorithm for the Fisher Information Matrix of a VARMAX Process

Algorithms 2023, 16(8), 364; https://doi.org/10.3390/a16080364 - 28 Jul 2023

Abstract

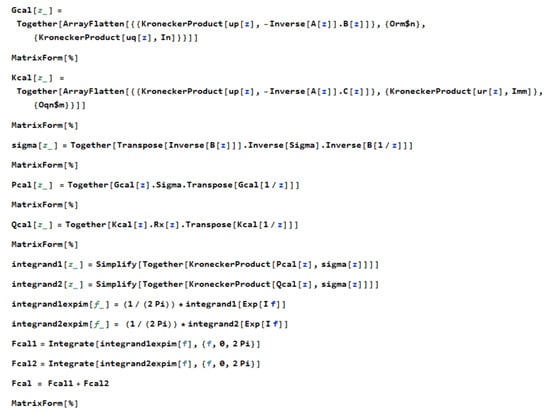

In this paper, an algorithm for Mathematica is proposed for the computation of the asymptotic Fisher information matrix for a multivariate time series, more precisely for a controlled vector autoregressive moving average stationary process, or VARMAX process. Meanwhile, we present briefly several algorithms

[...] Read more.

This paper proposes a Mathematica algorithm for computing the asymptotic Fisher information matrix of a multivariate time series, more precisely of a controlled vector autoregressive moving average stationary process, or VARMAX process. We also briefly present several algorithms published in the literature and discuss the sufficient condition for the invertibility of that matrix based on the eigenvalues of the process operators. The results are illustrated by numerical computations.

(This article belongs to the Collection Feature Papers in Algorithms for Multidisciplinary Applications)

Open Access Article

Model Retraining: Predicting the Likelihood of Financial Inclusion in Kiva’s Peer-to-Peer Lending to Promote Social Impact

Algorithms 2023, 16(8), 363; https://doi.org/10.3390/a16080363 - 28 Jul 2023

Abstract

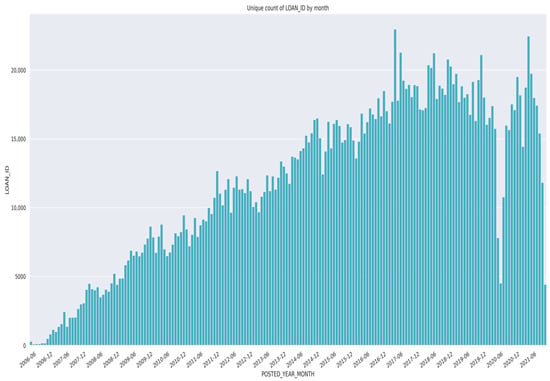

The purpose of this study is to show how machine learning can be leveraged as a tool to govern social impact and drive fair and equitable investments. Many organizations today are establishing financial inclusion goals to promote social impact and have been increasing

[...] Read more.

The purpose of this study is to show how machine learning can be leveraged as a tool to govern social impact and drive fair and equitable investments. Many organizations today are establishing financial inclusion goals to promote social impact and have been increasing their investments in this space. Financial inclusion is the opportunity for individuals and businesses to have access to affordable financial products including loans, credit, and insurance that they may otherwise not have access to with traditional financial institutions. Peer-to-peer (P2P) lending serves as a platform that can support and foster financial inclusion and influence social impact and is becoming more popular today as a resource to underserved communities. Loans issued through P2P lending can fund projects and initiatives focused on climate change, workforce diversity, women’s rights, equity, labor practices, natural resource management, accounting standards, carbon emissions, and several other areas. With this in mind, AI can be a powerful governance tool to help manage risks and promote opportunities for an organization’s financial inclusion goals. In this paper, we explore how AI, specifically machine learning, can help manage the P2P platform Kiva’s investment risks and deliver impact, emphasizing the importance of prediction model retraining to account for regulatory and other changes across the P2P landscape to drive better decision-making. As part of this research, we also explore how changes in important model variables affect aggregate model predictions.

(This article belongs to the Collection Feature Papers on Artificial Intelligence Algorithms and Their Applications)

Topics

Topic in

Algorithms, Applied Sciences, Mathematics, Symmetry, AI

Applied Metaheuristic Computing: 2nd Volume

Topic Editors: Peng-Yeng Yin, Ray-I Chang, Jen-Chun Lee

Deadline: 31 August 2023

Topic in

Algorithms, Axioms, Fractal Fract, Mathematics, Symmetry

Approximation Theory in Computer and Computational Sciences

Topic Editors: Faruk Özger, Asif Khan, Syed Abdul Mohiuddine, Zeynep Ödemiş Özger

Deadline: 20 September 2023

Topic in

Entropy, Algorithms, Computation, MAKE, Energies, Materials

Artificial Intelligence and Computational Methods: Modeling, Simulations and Optimization of Complex Systems

Topic Editors: Jaroslaw Krzywanski, Yunfei Gao, Marcin Sosnowski, Karolina Grabowska, Dorian Skrobek, Ghulam Moeen Uddin, Anna Kulakowska, Anna Zylka, Bachil El Fil

Deadline: 20 October 2023

Topic in

AI, Algorithms, Applied Sciences, BDCC, MAKE, Sensors

Artificial Intelligence and Fuzzy Systems

Topic Editors: Amelia Zafra, Jose Manuel Soto Hidalgo

Deadline: 30 November 2023

Special Issues

Special Issue in

Algorithms

Artificial Intelligence for Intelligent Systems

Guest Editors: Sathishkumar Easwaramoorthy, B Nagaraj

Deadline: 15 August 2023

Special Issue in

Algorithms

Machine Learning in Medical Signal and Image Processing

Guest Editor: Maryam Ravan

Deadline: 1 September 2023

Special Issue in

Algorithms

Hybrid Intelligent Algorithms

Guest Editors: Grigorios N. Beligiannis, Efstratios F. Georgopoulos, Spiridon D. Likothanassis, Isidoros Perikos, Ioannis X. Tassopoulos

Deadline: 15 September 2023

Special Issue in

Algorithms

Algorithms for Smart Cities

Guest Editors: Gloria Cerasela Crisan, Ha Duy Long, Elena Nechita

Deadline: 30 September 2023

Topical Collections

Topical Collection in

Algorithms

Feature Papers in Algorithms for Multidisciplinary Applications

Collection Editor: Francesc Pozo

Topical Collection in

Algorithms

Feature Papers in Randomized, Online and Approximation Algorithms

Collection Editor: Frank Werner

Topical Collection in

Algorithms

Featured Reviews of Algorithms

Collection Editors: Arun Kumar Sangaiah, Xingjuan Cai
